How's Linear so fast? A technical breakdown

A few milliseconds is all it takes to update an issue in Linear. A traditional CRUD app doing the same thing takes about 300ms. How do they do it? There's no secret silver bullet to performance. The reality is that it's built from the ground up on the right foundation, then improved by countless decisions. My goal is to walk through some of the techniques that make Linear feel the way it does and help you implement the same.

What I'll cover

Inverting the server

Making the first load feel instant

The sync engine

Designed for speed

The animation layer

A quick disclaimer: I've never worked at Linear and have never seen their code. Everything here comes from inspecting the app, reading their blog posts, or watching their conference talks.

Inverting the server

Most web apps live inside the same loop. The user clicks. The browser fires an HTTP request. A server queries a database, formats a response, sends it back. The browser repaints. The end result is a spinner, a skeleton, or a frozen UI for a few hundred milliseconds while the app waits on the network.

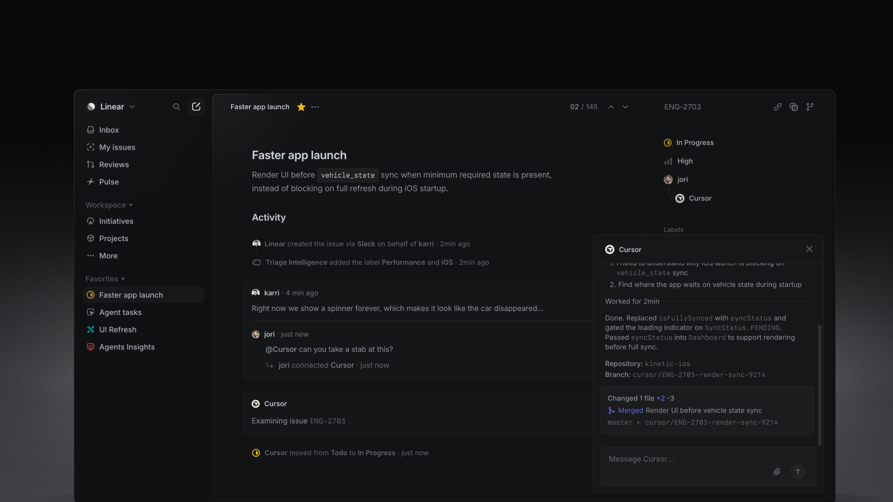

Linear inverts the relationship. The actual database the UI reads from is in the browser, in IndexedDB. Mutations apply locally first, then asynchronously push to the server, which broadcasts deltas back to other clients via WebSocket.

This is the most critical piece of Linear's speed. When building a fast web app, the biggest bottleneck you will fight is the network. Any data sent between the client and server can cost you hundreds of milliseconds. The best approach is to eliminate the need for a network request entirely, which is exactly what Linear does.

// A traditional web app updating the server

async function updateIssue({ issue }) {

showSpinner();

const response = await fetch(`/api/issues/${issue.id}`, {

method: "PATCH",

body: JSON.stringify({ title: issue.title }),

});

const updated = await response.json();

setIssue(updated);

hideSpinner();

}

// vs Linear

issue.title = "Faster app launch";

issue.save();

The first line, issue.title = "Faster app launch", mutates an in-memory MobX observable. The second queues a transaction that their sync engine batches and flushes to the server. The UI re-renders synchronously off the local mutation, before the network has done anything at all. There are no spinners because there is nothing to wait for.

Tuomas, one of Linear's co-founders, said this at a conference in 2024: 'Literally the first lines of code that I wrote was the sync engine, which is very uncommon to what you usually do when you're a startup.' From day one, Linear was built for speed, and they've kept the promise ever since.

I know most people won't build a custom sync engine like Linear just to make their app feel fast, and they don't need to. Libraries like React Query and SWR can get surprisingly close with optimistic updates. Most web apps feel slow because the UI waits for the network before updating state:

// optimistic mutation with swr

mutate(

`/api/issues/${issue.id}`,

{ ...issue, title: "Faster app launch" },

false

);

// vs Linear

issue.title = "Faster app launch";

issue.save();

The key idea is simple: UI responsiveness should not depend on network latency. Users perceive speed based on how quickly the interface reacts, not how quickly the server responds.

Optimistic updates are one of the highest-leverage improvements you can make:

eliminate unnecessary spinners

update state immediately

validate in the background

rollback only if needed
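The four steps above can be sketched without any library. This is a minimal illustration, not Linear's or SWR's implementation: the `issues` map, `applyOptimistic`, `updateIssue`, and the `send` callback are all hypothetical names, and the rollback is the simplest possible one (it restores the previous record and ignores interleaved edits).

```javascript
// Hypothetical in-memory store standing in for your client-side state.
const issues = new Map([
  ["ISS-1", { id: "ISS-1", title: "Slow app launch" }],
]);

// Update state immediately and return a function that undoes the change.
function applyOptimistic(store, id, patch) {
  const previous = store.get(id);
  store.set(id, { ...previous, ...patch });
  return () => store.set(id, previous);
}

// `send` is any function that returns a promise and rejects on failure,
// for example a thin wrapper around fetch(). By the time it is called,
// the UI has already updated, so no spinner is needed.
async function updateIssue(store, id, patch, send) {
  const rollback = applyOptimistic(store, id, patch);
  try {
    await send(id, patch); // validate in the background
    return true;
  } catch {
    rollback(); // roll back only if the request fails
    return false;
  }
}
```

In a real app the store would be whatever drives your rendering (a React Query cache, a MobX observable, a Redux slice), and a failed request would usually surface a toast alongside the rollback.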

Not every app needs real-time sync. Every app needs speed.

A peek into Linear's stack

Linear is built on one of the simplest stacks you can find: React, TypeScript, MobX, Postgres, a CDN. There's no edge database, no React Server Components, and no fancy framework.

Frontend

React 18+

MobX (observable graph, granular re-renders)

TypeScript

Rolldown-Vite + plugin-react-oxc (mid-2025; previously Rollup; previously Parcel)

ProseMirror (rich text editor, high-confidence inference)

Radix UI primitives (popovers, menus, focus traps)

Emotion/styled-components (CSS-in-JS)

Comlink (Worker RPC)

Inter Variable + Berkeley Mono (single woff2 each, font-display: swap)

Backend

Node.js + TypeScript (single language for all server code)

PostgreSQL on Cloud SQL (issues table partitioned 300 ways)

Memorystore Redis (event bus + cache + sync cursors)

pgvector (similar-issue detection, OpenAI ada embeddings)

Kubernetes on GCP (one workload per concern)

Cloudflare Workers (multi-region edge proxy)

Other clients

Desktop: Electron (same web JS, native chrome)

Mobile: Swift (iOS) + Kotlin (a separate full reimplementation)

Marketing

Next.js (static)

styled-components

Inline SVG sprite

The biggest standout to me is their decision to stick with client-side rendering. CSR often gets criticized for slow initial loads, but with the right architecture it can feel nearly instant.

I'm also a big fan of the simplicity it brings. Keeping the app entirely client-side creates a much cleaner mental model and removes a lot of the complexity and overhead that comes with server-rendered apps.

So how does Linear make its client-side rendered app feel instant?

Making the first load feel instant

One thing I obsess over is the first load, and Linear clearly does as well. For productivity software especially, the time it takes before you can actually start working is one of the most important UX metrics.

A lot of people assume client-side rendered apps have to feel slow on startup, but that's not necessarily true. The key is understanding that perceived performance matters more than raw load time.

If the interface becomes interactive immediately, progressively fills in data, and avoids blocking the user behind loaders and spinners, the app can feel instant even while work is still happening in the background.

The bundler arc: Parcel, Rollup, Vite, Rolldown

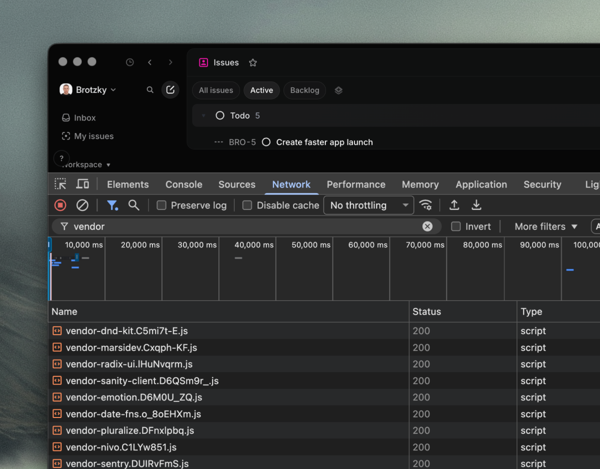

The first step to making an app feel instant happens long before runtime. It starts at build time. Again, the network is the bottleneck, so shipping the least amount of JavaScript and CSS is critical to fast load times.

Linear has rewritten its build pipeline four times: Parcel → Rollup → Vite → Rolldown. Each migration was driven by the same goal: reduce the amount of work the browser has to do before the app becomes interactive. From their own blog posts they claim:

50% less code shipped.

30% smaller after compression.

Cold-cache page loads got 10 to 30% faster.

Time-to-first-paint of the active-issues view dropped 59% on Safari.

Memory usage dropped 70 to 80%.

Most of that came from a combination of decisions Linear's changelog calls out together: targeting only modern browsers, better dead-code elimination, and aggressive code splitting. Dropping legacy support is the big win (no polyfills, no ES5 transpilation, no nomodule fallback) but the dead-code and chunking work matters just as much.

Even with all of these optimizations, Linear still ships a substantial amount of code: roughly 21 MB of minified JavaScript and 40 MB of WebAssembly. The difference is that it's aggressively partitioned into hundreds of route-level chunks that are fetched on demand.

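// vite.config.ts (reconstruction; matches observed chunk graph)

import { defineConfig } from "vite";

// Assumed plugin package, based on the plugin-react-oxc entry in the stack list.
import react from "@vitejs/plugin-react-oxc";

export default defineConfig({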

plugins: [react()],

build: {

target: "esnext", // no legacy syntax, no polyfills

cssMinify: "lightningcss",

modulePreload: { polyfill: false },

rollupOptions: {

output: {

// One chunk per npm package > ~3 KB. Cache invalidation

// becomes per-library instead of per-app-revision.

manualChunks(id) {

if (id.includes("node_modules")) {

const pkg = id.match(/node_modules\/([^/]+)/)?.[1];

if (pkg) return `vendor-${pkg}`;

}

},

},

},

},

});

The lesson isn't which bundler to pick. The sequence is the lesson: drop legacy browsers, go native ESM, pick a modern bundler, move to a Rust toolchain. Each step is small. Stacked, they cut Linear's first-load JavaScript roughly in half and their build time by an order of magnitude.

Preloading the critical path

Splitting the bundle into hundreds of chunks creates a new problem. Each chunk imports other chunks, and the browser doesn't know what those are until it parses the entry script. Without help, the cold-load timeline becomes a waterfall: fetch the entry, parse it, fetch its imports, parse those, fetch their imports. Every level adds a network round-trip.

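Linear's answer is to declare those chunks up front: the HTML lists every chunk on the critical path as a modulepreload link next to the entry script. Before any JavaScript runs, the browser sees the list and fires off the requests in parallel. By the time the entry script reaches its first import, the chunks are already in cache.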

<script type=module crossorigin

src="https://static.linear.app/client/assets/html.2_JBQs3Q.js"></script>

<link rel=modulepreload crossorigin

href="https://static.linear.app/client/assets/vendor-mobx.Crhy2qQc.js">

<link rel=modulepreload crossorigin

href="https://static.linear.app/client/assets/SyncWebSocket.Djw6l_Op.js">

<link rel=modulepreload crossorigin

href="https://static.linear.app/client/assets/DatabaseManager.DKssGAN8.js">

<!-- ...and many more -->

The crossorigin attribute on each preload matches the crossorigin on the entry script, so the browser reuses the cached fetch instead of treating preload and import as separate resources. It's the same trick as the font preload covered below, applied to every chunk on the critical path.

The cold-load timeline collapses from a sequential waterfall into a single parallel batch. The network still does the work. It just does it all at once. The beauty of this technique is you're able to do all this work in the background when the user first hits the login page. In a few seconds the full app is stored in cache and served instantly.
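To make the mechanics concrete, here is a rough sketch of how a build step could produce that preload list: walk the static import graph from the entry chunk and emit one tag per reachable chunk. The `manifest` shape, the file names, and both function names are assumptions for illustration; real bundler manifests carry more fields, and this is not Linear's build code.

```javascript
// Hypothetical, simplified build manifest: chunk file -> its static imports.
const manifest = {
  "entry.js": ["vendor-mobx.js", "SyncWebSocket.js"],
  "SyncWebSocket.js": ["vendor-mobx.js", "DatabaseManager.js"],
  "DatabaseManager.js": [],
  "vendor-mobx.js": [],
};

// Collect every chunk reachable from the entry, i.e. everything the
// browser would otherwise discover one network round-trip at a time.
function collectPreloads(graph, entry) {
  const seen = new Set();
  const stack = [...(graph[entry] ?? [])];
  while (stack.length > 0) {
    const file = stack.pop();
    if (file === entry || seen.has(file)) continue;
    seen.add(file);
    stack.push(...(graph[file] ?? []));
  }
  return [...seen].sort();
}

// Emit one modulepreload tag per chunk. The crossorigin attribute mirrors
// the entry script so the preloaded response can be reused by the import.
function renderPreloadTags(graph, entry, baseUrl) {
  return collectPreloads(graph, entry)
    .map((file) => `<link rel="modulepreload" crossorigin href="${baseUrl}${file}">`)
    .join("\n");
}
```

Only static imports belong in this list. Dynamically imported route chunks are better left to on-demand loading or background caching, otherwise the preload list grows to the size of the whole app.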

The service worker

That handles the boot. The rest of the app, the route-level chunks for views the user hasn't visited yet, gets cached in the background by a service worker. The worker has a precache manifest baked into its source, around 1,200 hashed assets covering route chunks, icons, and fonts, and pulls them down lazily after the page is interactive. Within a few seconds of hitting the login screen, the full app is sitting in cache.

This buys two things. Subsequent navigations skip the network entirely; the service worker answers directly from its cache without even going through the HTTP cache's lookup path. And the app keeps working when the network doesn't. Combined with the local-first sync engine (which already has the user's data in IndexedDB), Linear is usable offline. You can read issues, create new ones, edit titles and descriptions, change statuses. Everything queues in the local transaction store and flushes the next time the connection comes back.

Modulepreload is for what the app needs now, parallel-fetched so the browser never blocks on a serial import chain. The service worker is for what the app needs next.
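A background precache along these lines can be sketched with the standard Cache API. Everything specific here is assumed: the manifest entries, the cache name, and the choice to run on activate are placeholders, and Linear's actual worker is not public. The pure helper decides what to download and what to evict; the wiring below it only runs inside a service worker.

```javascript
// Hypothetical precache manifest; a real one would be generated at build time.
const PRECACHE_URLS = [
  "/assets/route-inbox.a1b2c3.js",
  "/assets/route-settings.d4e5f6.js",
  "/fonts/InterVariable.woff2",
];

// Given the manifest and what is already cached, decide what to download
// and which stale entries (old hashes) to evict.
function planPrecache(manifestUrls, cachedUrls) {
  const wanted = new Set(manifestUrls);
  const cached = new Set(cachedUrls);
  return {
    toFetch: manifestUrls.filter((url) => !cached.has(url)),
    toDelete: cachedUrls.filter((url) => !wanted.has(url)),
  };
}

// Wiring, guarded so this file is inert outside a service worker.
if (typeof ServiceWorkerGlobalScope !== "undefined") {
  const CACHE_NAME = "app-precache"; // assumed name

  self.addEventListener("activate", (event) => {
    event.waitUntil((async () => {
      const cache = await caches.open(CACHE_NAME);
      const cachedUrls = (await cache.keys()).map((req) => new URL(req.url).pathname);
      const { toFetch, toDelete } = planPrecache(PRECACHE_URLS, cachedUrls);
      await Promise.all(toDelete.map((url) => cache.delete(url)));
      await cache.addAll(toFetch);
    })());
  });

  // Cache first; fall back to the network for anything not precached.
  self.addEventListener("fetch", (event) => {
    event.respondWith(
      caches.match(event.request).then((hit) => hit ?? fetch(event.request))
    );
  });
}
```

Because asset names are content-hashed, a cached entry never goes stale; a new deploy simply changes the manifest, and the next activation fetches the new hashes and drops the old ones.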

When you're building an app, always consider the size of your JavaScript and CSS bundles. The best technique is to eliminate as much code as possible, split what remains into small pieces, and precache it in the background.

Bundle composition

I found it interesting that every npm package gets its own chunk and its own hash, cached independently. A traditional vendor.js invalidates the entire dependency graph on any bump. Linear's chunking turns vendor caching from binary to fine-grained. Bumping a single dependency invalidates one chunk; the rest stay cached.
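If you want per-package chunks in your own build, the naming function needs a little care: scoped packages (`@scope/name`) and nested `node_modules` directories both trip up a naive regex. The helper below is a sketch of one way to handle them; `vendorChunkName` is a made-up name and the output naming scheme is an assumption, not what Linear uses.

```javascript
// Map a resolved module id to a per-package chunk name.
// Handles scoped packages (@scope/name) and nested node_modules folders.
function vendorChunkName(id) {
  const marker = "node_modules/";
  const index = id.lastIndexOf(marker);
  if (index === -1) return undefined; // app code: leave it to the bundler

  const parts = id.slice(index + marker.length).split("/");
  const pkg = parts[0].startsWith("@") ? `${parts[0]}/${parts[1]}` : parts[0];

  // Keep "@" and "/" out of the chunk file name.
  return `vendor-${pkg.replace("@", "").replace("/", "-")}`;
}
```

A function with this shape can be passed as `manualChunks` in a Rollup-style output config. Returning `undefined` for application modules leaves route-level splitting to the bundler's defaults.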

Fonts

Font loading is one of those details a lot of apps get wrong. The failure modes are visible: invisible text for half a second, layout shifts as the real font swaps in, double-fetched resources because the preload didn't match. Linear's setup avoids all three:

<!-- in <head> of index.html -->

<link rel="preload"

href="https://static.linear.app/fonts/InterVariable.woff2?v=4.1"

as="font" type="font/woff2" crossorigin="anonymous">

<link rel="preconnect" href="https://static.linear.app" crossorigin>

@font-face {

font-family: "Inter Variable";

font-weight: 100 900;

font-display: swap;

src: url(https://static.linear.app/fonts/InterVariable.woff2?v=4.1)

format("woff2");

}

/* Italic and Berkeley Mono follow the same shape, single woff2 each. */

Variable fonts cover the full 100–900 weight axis in a single woff2, eliminating per-weight requests. font-display: swap renders the fallback stack immediately and swaps to Inter when it loads. The trick that's easy to miss: crossorigin="anonymous" on the preload tag. Without it, the browser preloads the font, then fetches it again when CSS later references it, because the two requests have different CORS modes. crossorigin on the preload makes the browser reuse the cached one.

This all seems simple, but I'm always surprised at how many apps load fonts incorrectly. Linear is a great example of thinking through the details and ensuring font loading is as fast as possible.

The loader shell

Another key technique for making the first load feel fast: inlined in <head> is just enough CSS to paint the loading state, with no external stylesheet to fetch. Remember, the network is the bottleneck, and it's what you'll always be fighting to make your app feel fast. In this case, Linear eliminates a network request dependency by inlining the critical CSS required to show the user an app shell.

<style>

:root {

--bg-color: #f5f5f5;

--bg-base-color: #fcfcfd;

--bg-border-color: #e0e0e0;

--sidebar-width: 244px;

}

html { background: var(--bg-color); height: 100%; }

body { font-family: "Inter Variable", Arial, Helvetica, sans-serif; }

#appBorders {

border: 1px solid var(--bg-border-color);

background: var(--bg-base-color);

margin: 8px 8px 8px var(--sidebar-width);

border-radius: 12px;

}

#logo { transform: translateZ(0); }

@keyframes logoBackgroundPulse {

0% { opacity: 0; transform: scale(0.8); }

70% { opacity: 1; }

100% { opacity: 0; transform: scale(1.0); }

}

</style>

<script>performance.mark("appStart");</script>

Beyond CSS there is also a bunch of inline JavaScript:

Before any bundle has parsed, it reads localStorage.splashScreenConfig, merges any sessionStorage override on top, and applies the user's remembered shell tokens directly to document.documentElement.style: sidebar background, base color, border color, sidebar width, agent toolbar height. It detects color-scheme preference and Electron context. It checks whether localStorage.ApplicationStore exists, and if not, adds a logged-out class that switches the shell to the auth layout. By the time the first JavaScript bundle comes from the network the loading screen is already correctly themed, sized, and positioned for whether the user is logged in.
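A stripped-down version of that boot step might look like the following. The `splashScreenConfig` key comes from inspecting the app; the JSON shape (a flat map of token name to CSS value) and the function name `applyShellTokens` are my assumptions, so treat this as a sketch of the idea rather than Linear's script. Storage and style are passed in as arguments so the function can be exercised without a browser.

```javascript
// Merge the persisted shell config with any per-tab override and apply
// each token as a CSS custom property. In the browser you would call:
//   applyShellTokens(localStorage, sessionStorage, document.documentElement.style)
function applyShellTokens(persistent, session, style) {
  const read = (storage) => {
    try {
      return JSON.parse(storage.getItem("splashScreenConfig") ?? "{}");
    } catch {
      return {}; // corrupt JSON must never block the first paint
    }
  };

  // Session values win over persisted ones.
  const tokens = { ...read(persistent), ...read(session) };
  for (const [name, value] of Object.entries(tokens)) {
    style.setProperty(`--${name}`, String(value));
  }
  return tokens;
}
```

The important property is that this runs synchronously in an inline script, reads only local storage, and never throws, so the shell is themed before the first bundle arrives.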

Render first, authenticate second

Authentication is another step where most apps give up their performance budget. The conventional flow: fetch the HTML, load the bundle, validate the session, fetch the user, fetch the workspace, then render. One to three seconds before the user sees anything.

Linear treats auth the same way it treats mutations. Assume the happy path, verify in the background. The interesting part is what they use as the "is this user logged in" signal.

Most apps reach for a JS-readable cookie that runs alongside the real HttpOnly session token, just so the bundle can branch on it without touching anything sensitive. Linear does something simpler. The inline boot script checks whether localStorage.ApplicationStore exists:

if (localStorage.getItem("ApplicationStore") === null) {

document.documentElement.classList.add("logged-out");

}

If it's there, the user has used Linear in this browser before, which means their workspace is already sitting in IndexedDB. This goes back to the first section we covered where they inverted the server: the database lives in the browser. If it's missing, there's nothing to render anyway, so the shell flips to its logged-out layout and the login flow takes over.

The signal isn't "do you have a valid session." It's "do we have anything to show you." That's a stronger predicate, because it's exactly what the next render depends on. The actual session token sits in a cookie. The bundle never tries to be smart about it. It just renders what it has and lets the next request (the WebSocket handshake, a sync delta, any HTTP call) be the thing that fails with a 401 if the session has gone stale. When that happens, the client redirects to login. It almost never fires, because the cookie was set by the same server that's about to verify it.

The whole pattern is consistent with the rest of the architecture: the client trusts what's local, the server is the source of truth for correctness, and the two reconcile asynchronously. Just like a mutation. Just like a sync delta.
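Reduced to its two decisions, the pattern looks something like this. Both function names are hypothetical and the only detail taken from the app is the `ApplicationStore` key; the point is that the first decision uses local state only, and the second is a single check applied to whatever response arrives next.

```javascript
// Decide what to paint from local state alone; no network involved.
function chooseInitialView(storage) {
  return storage.getItem("ApplicationStore") === null ? "login" : "app";
}

// Run for every server response (HTTP call, sync request, WebSocket
// handshake). Only a 401 changes course; anything else leaves the UI alone.
function handleServerStatus(status, redirectToLogin) {
  if (status === 401) {
    redirectToLogin();
    return false;
  }
  return true;
}
```

In a browser, `storage` would be `localStorage` and `redirectToLogin` would navigate to the login route, ideally after clearing the stale local data.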

This is maybe one of my favorite details about Linear, and one I wish more CSR apps implemented. For authentication, follow the signal, assume the happy path, and fall back if it fails.

The sync engine

Most of what makes Linear fast lives downstream of one decision: the server is a sync target, not a source of truth for the UI. The internals have been thoroughly reverse-engineered already, and Tuomas has given two excellent talks on the architecture. I'm not going to retrace them. What I want to do is name the three pillars that actually produce the speed, because the speed is a property of how they fit together, not of any single one.

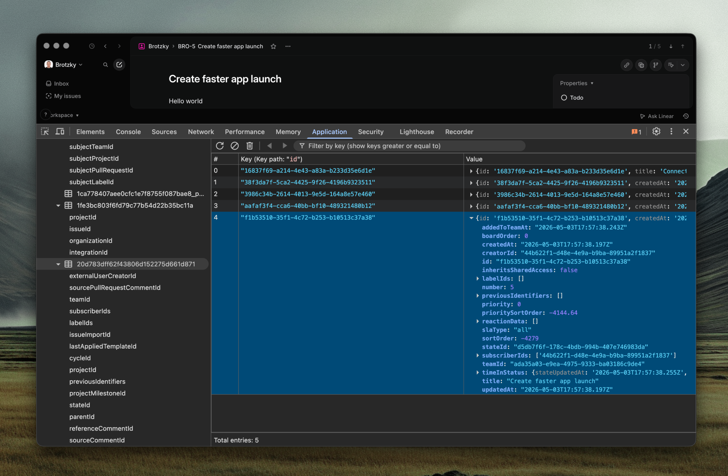

1. The data is already there, and only what you need is

When the bundle boots, it doesn't fetch the workspace. It hydrates from IndexedDB into an in-memory MobX object pool, and every query from the UI goes to the pool first. There's no "loading issues" state because the issues are already on the user's machine.

The piece most write-ups skip is what isn't eagerly hydrated. Linear loads roughly 58 model types out of around 80 on bootstrap, the workspace skeleton. The two heaviest tables, Issue and Comment, lazy-hydrate on demand. After they load once, a partial index records exactly which slice was fetched, so the next read for the same slice never hits the network. This is data-level code splitting, and it's what lets the engine scale: startup cost tracks the workspace structure, not the workspace size. A 10,000-issue workspace boots about as fast as a 100-issue one.
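The partial-index idea can be illustrated with a small in-memory class. This is an assumption-heavy sketch: `LazyCollection`, the slice keys, and the `fetchSlice` callback are invented for the example, and the real engine persists both the records and the index in IndexedDB rather than in a Map.

```javascript
// Tracks which slices of a large table have already been loaded.
class LazyCollection {
  constructor(fetchSlice) {
    this.fetchSlice = fetchSlice;  // (sliceKey) => Promise<record[]>
    this.records = new Map();      // id -> record (the in-memory pool)
    this.loadedSlices = new Map(); // sliceKey -> Promise (the partial index)
  }

  // Resolves once the slice is in the pool. Repeat calls for the same
  // slice reuse the first request instead of hitting the network again.
  ensure(sliceKey) {
    let pending = this.loadedSlices.get(sliceKey);
    if (!pending) {
      pending = this.fetchSlice(sliceKey).then((rows) => {
        for (const row of rows) this.records.set(row.id, row);
      });
      // Forget failed loads so a later call can retry.
      pending.catch(() => this.loadedSlices.delete(sliceKey));
      this.loadedSlices.set(sliceKey, pending);
    }
    return pending;
  }
}
```

A view would call something like `issues.ensure("project:alpha")` before rendering a project. The first call fetches; every later call for that slice resolves from what is already local.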

This is the first spinner-killer. Click into a project, the issues are there. Filter by assignee, the index is already built. There's nothing to fetch because there's nothing missing.

2. Mutations don't wait for the network

When you change an issue's status, three things happen in the same tick: the MobX observable updates so the UI reflects the change, the mutation is written to a durable transaction queue in IndexedDB so it survives a refresh, and it's queued for the server. The network hasn't been touched yet.

The user never waits to see their own change. The retry, the rollback, the durability across reloads, all background. If the server rejects, the observable reverts and there's a brief flicker, but in practice that almost never happens because most invalid mutations are caught before the transaction is even created.

This is the second spinner-killer. As I keep saying: the conventional flow ends with the server's response. Linear's flow starts with the local mutation and treats the server as a confirmation step, not a permission step.
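Here is a compact sketch of that flow. `TransactionQueue` and its methods are hypothetical, and a plain `Map` stands in for the durable IndexedDB store (real IndexedDB writes are asynchronous, which this sketch glosses over). What it does show is the ordering: the local write and the queue entry happen together, and the network only appears in `flush`.

```javascript
// `durable` stands in for an IndexedDB object store; any object with
// set/delete/values works, so a Map is enough for illustration.
class TransactionQueue {
  constructor(durable, send) {
    this.durable = durable;
    this.send = send; // (batch) => Promise, rejects on failure
    this.nextId = 1;
  }

  // All three steps run synchronously, before any network activity.
  mutate(model, field, value) {
    const tx = { id: this.nextId++, modelId: model.id, field, value, previous: model[field] };
    model[field] = value;        // 1. update what the UI reads
    this.durable.set(tx.id, tx); // 2. persist so a reload can replay it
    return tx;                   // 3. it now waits for the next flush
  }

  // Send everything pending as one batch. Entries are only removed after
  // the server accepts them, so a failed flush is retried later.
  async flush() {
    const batch = [...this.durable.values()];
    if (batch.length === 0) return 0;
    await this.send(batch);
    for (const tx of batch) this.durable.delete(tx.id);
    return batch.length;
  }

  // If the server rejects a transaction, restore the old value.
  revert(model, tx) {
    model[tx.field] = tx.previous;
    this.durable.delete(tx.id);
  }
}
```

With per-property observables in place of the plain object, step 1 is what triggers the re-render; steps 2 and 3 are invisible to the user.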

3. One delta, one cell

When the server confirms a mutation (yours or someone else's), the change comes back as a small JSON envelope describing what moved. The client applies it by writing to the corresponding MobX observable.

Because every property on every model is its own observable, and every component that reads one is wrapped in observer(), MobX knows exactly which components depend on which fields. A delta that updates one field of one issue re-renders exactly the components that read that field. Not the parent list, not the sidebar, one cell. A 50-issue update is 50 cell re-renders, not a list re-render. This is what lets a busy workspace stay smooth when ten people are editing things at once: the cost of receiving updates scales with what changed, not with what's on screen.
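To see why field-level granularity matters, here is a dependency-free stand-in for per-property observables. MobX tracks dependencies automatically through `observer()`; in this sketch subscriptions are explicit, and `ObservableModel` is an invented name, so read it as an illustration of the principle rather than of MobX internals.

```javascript
// A tiny stand-in for per-property observables.
class ObservableModel {
  constructor(fields) {
    this.fields = { ...fields };
    this.listeners = new Map(); // field name -> Set of callbacks
  }

  get(field) {
    return this.fields[field];
  }

  // Register a callback for one field; returns an unsubscribe function.
  subscribe(field, callback) {
    if (!this.listeners.has(field)) this.listeners.set(field, new Set());
    this.listeners.get(field).add(callback);
    return () => this.listeners.get(field).delete(callback);
  }

  // Apply a delta: only subscribers of fields that actually changed run.
  applyDelta(delta) {
    for (const [field, value] of Object.entries(delta)) {
      if (Object.is(this.fields[field], value)) continue;
      this.fields[field] = value;
      for (const callback of this.listeners.get(field) ?? []) callback(value);
    }
  }
}
```

A status cell subscribes to `status`, a title cell to `title`. A delta that changes only the status wakes exactly one of them, which is the "one delta, one cell" behavior described above.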

Why the three fit together

Take any one away and the illusion breaks. A local database without optimistic writes still spins on save. Optimistic writes without granular observables still jank on every delta. Granular observables without a local database still wait on initial load. React Query gives you cached reads. SWR gives you stale-while-revalidate. Redux Toolkit gives you optimistic updates. None of them get you to where Linear is, because the speed isn't a property of any single layer. It's a property of the system.

This is also the chapter that earns the previous two. The bundler and the loader shell aren't the reason Linear feels fast. The sync engine is. What the bundler and loader buy you is not wasting the budget the sync engine earns.

Designed for speed

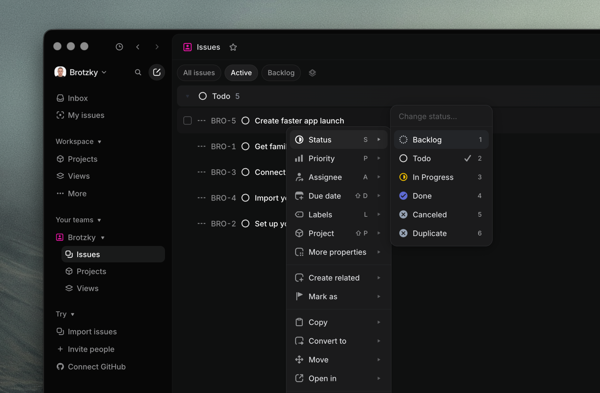

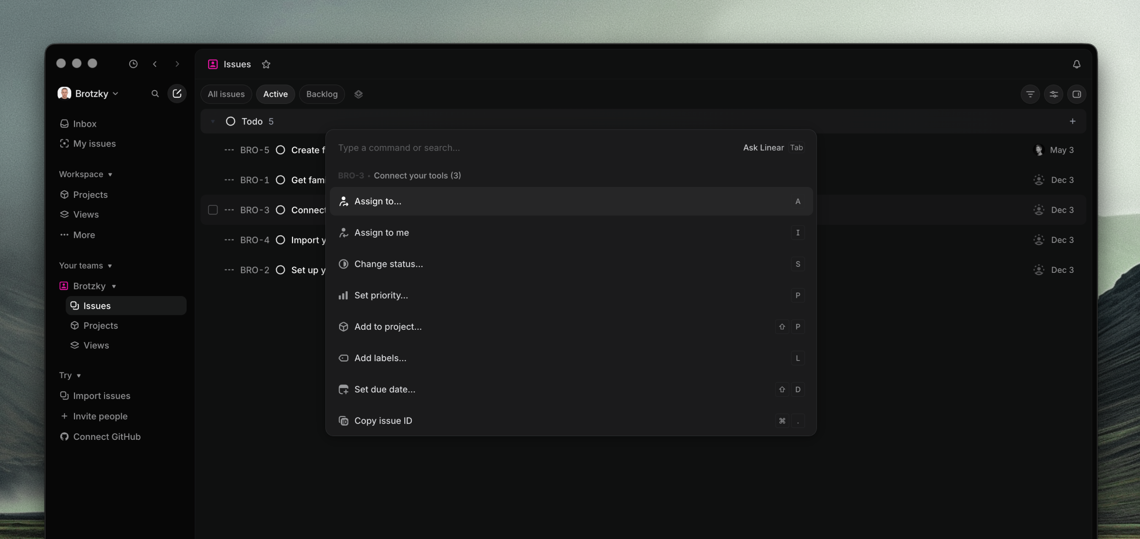

Speed isn't only an engineering property. It's also a design property. A perfectly-tuned sync engine still loses to a slow input model: if the fastest path to an action requires a mouse, three menus, and a click, the user pays for those steps regardless of how fast the underlying mutation runs.

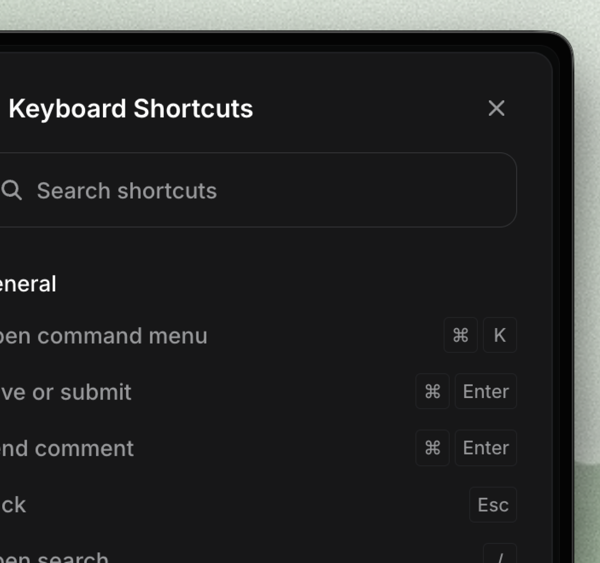

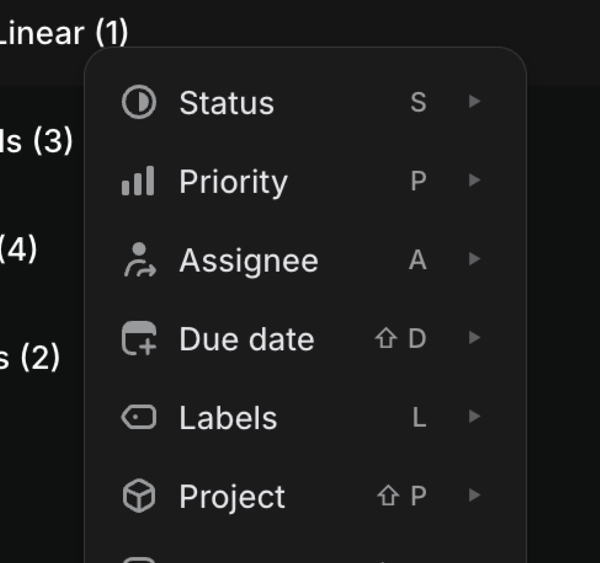

Linear is built around the assumption that anyone using it for more than a few hours a week wants to keep their hands on the keyboard. Every common action has a shortcut. The command palette is one keystroke away. The right-click menu is custom-built. None of these are accidents. They're the natural shape of a tool you use all day.

Each technique alone would be fine. Pasted on top of an otherwise traditional app, it would buy a few percent. None of them, in isolation, would let you ship Linear.

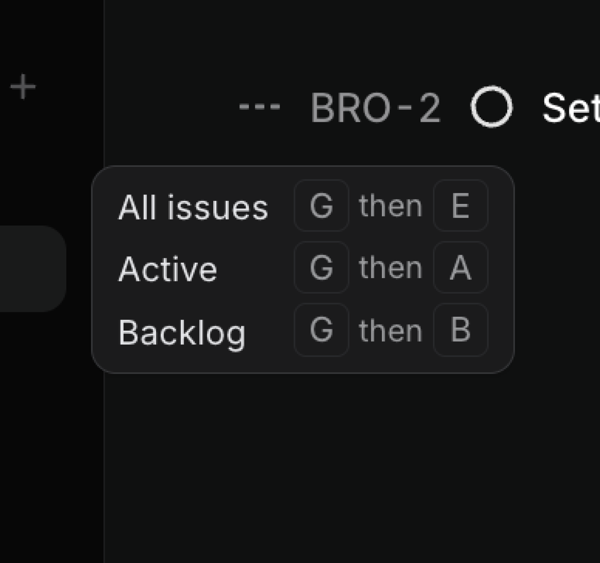

Every action has a shortcut

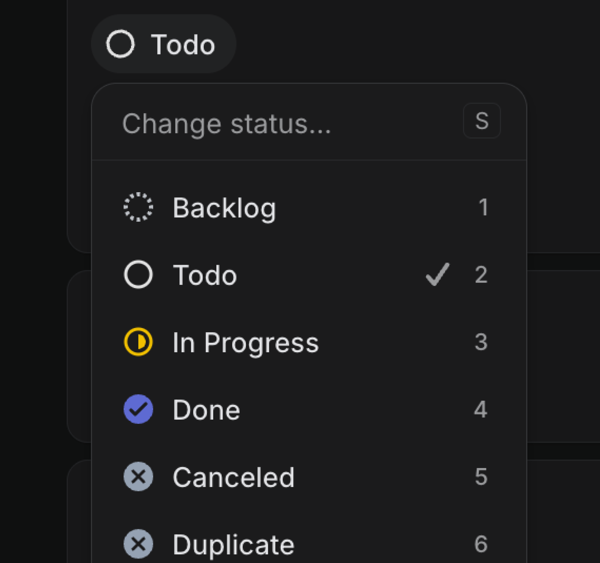

Single letters edit the focused issue. Two-letter chords navigate. Cmd modifiers act globally.

There is no frequent action in Linear that requires a mouse, and yet the mouse still works for everything. The keyboard isn't a power-user dialect bolted onto a mouse-first app. It's the fast path through an app that supports both, and the slow paths exist for users who haven't invested in learning the shortcuts yet. The two coexist on purpose: the app is approachable for new users, and gets faster the more you use it.

The ergonomics matter too. Single-letter edits are the lowest-friction shortcuts the browser provides: no modifier, no hover delay, no click target, no Fitts's law penalty. Changing an issue's status goes from "find pill, click pill, find option, click option" to "press S, type two letters, enter."
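Single keys and two-key chords can share one small state machine. The sketch below is my own: the bindings are made-up examples rather than Linear's keymap, and the one-second chord window is an arbitrary assumption. Time is passed in explicitly so the matcher stays a pure function of its inputs.

```javascript
// Hypothetical bindings: single letters act, "g x" chords navigate.
const bindings = new Map([
  ["s", "change-status"],
  ["a", "assign"],
  ["g i", "go-to-inbox"],
  ["g b", "go-to-backlog"],
]);

// Returns press(key, nowMs), which yields the matched action or null
// (null also covers "first key of a chord, still waiting").
function createShortcutMatcher(keymap, chordTimeoutMs = 1000) {
  const prefixes = new Set(
    [...keymap.keys()].filter((k) => k.includes(" ")).map((k) => k.split(" ")[0])
  );
  let pending = null; // { key, at } while waiting for a chord's second key

  return function press(key, now) {
    if (pending && now - pending.at <= chordTimeoutMs) {
      const action = keymap.get(`${pending.key} ${key}`) ?? null;
      pending = null;
      if (action) return action;
      // No such chord: fall through and treat the key on its own.
    } else {
      pending = null; // no chord in progress, or it timed out
    }
    if (prefixes.has(key)) {
      pending = { key, at: now };
      return null;
    }
    return keymap.get(key) ?? null;
  };
}
```

In a browser this would sit behind a `keydown` listener that passes `event.key` and `event.timeStamp`, and that skips events while focus is inside an input or editor.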

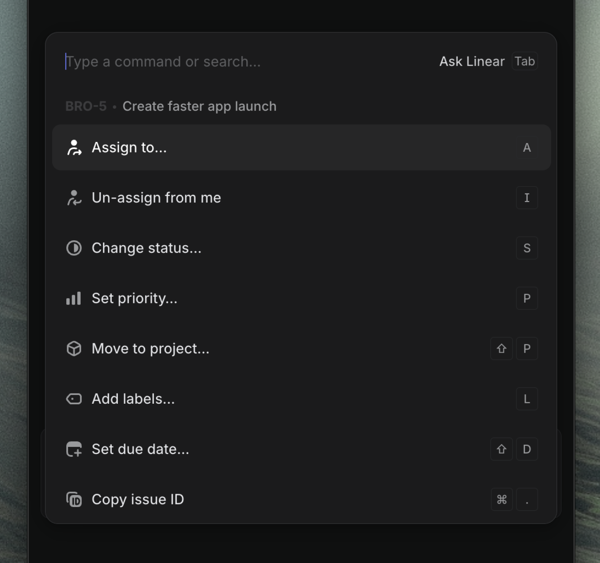

The command palette is always one keystroke away

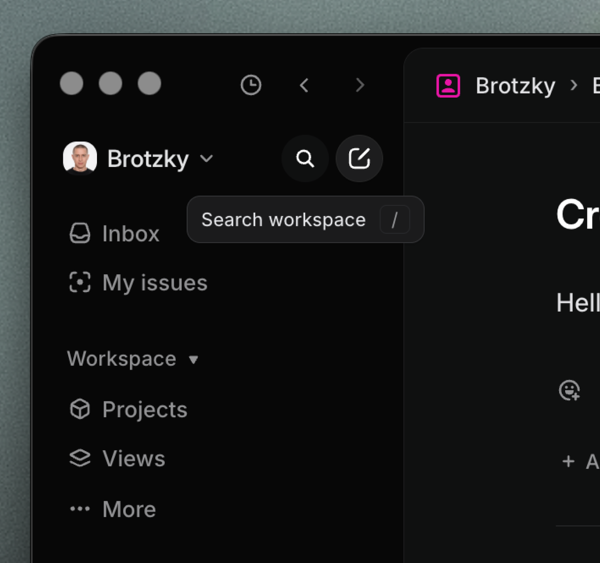

Cmd-K opens a command palette that lets users search over everything Linear knows about: issues, projects, labels, status changes, navigation, issue creation, settings, theme toggles. Results appear before you finish the second letter, because the palette is searching the local MobX object pool, not a server.

The architectural payoff is that the entire app becomes a search box. Navigation is search. Issue creation is search. Status changes are search scoped to statuses. The popover that opens when you click an issue's status pill is the same component as Cmd-K, parameterized by what it's editing. One primitive, used everywhere, running on data that's already in memory.
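Searching an in-memory pool is cheap enough to run on every keystroke. The following is a deliberately simple subsequence matcher to show the shape of it; the scoring rule and both function names are my own assumptions, and Linear's actual ranking is certainly more sophisticated.

```javascript
// Score a candidate by matching the query as an in-order subsequence.
// Returns -1 for no match; lower scores mean tighter matches.
function fuzzyScore(query, text) {
  const q = query.toLowerCase();
  const t = text.toLowerCase();
  let score = 0;
  let from = 0;
  for (const ch of q) {
    const at = t.indexOf(ch, from);
    if (at === -1) return -1;
    score += at - from; // penalize gaps between matched characters
    from = at + 1;
  }
  return score;
}

// Search any in-memory list of { title } records.
function searchPalette(items, query, limit = 5) {
  return items
    .map((item) => ({ item, score: fuzzyScore(query, item.title) }))
    .filter((entry) => entry.score >= 0)
    .sort((a, b) => a.score - b.score)
    .slice(0, limit)
    .map((entry) => entry.item);
}
```

Because the same function works on any list, scoping the palette to statuses, labels, or assignees is just a matter of passing a different `items` array, which matches the "one primitive, used everywhere" idea.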

A fast app needs both incredible engineering and design. You can build a perfect sync engine and a flawless rendering pipeline, and still ship something that feels slow if the design pushes the user through three menus and a modal to do anything. Engineering speed makes a single interaction fast. Design speed makes the path to each interaction short. The two go hand in hand, and Linear treats them that way.

For a tool used eight hours a day, the difference between a 200ms shortcut and a two-second mouse path compounds into hours a year. The sync engine and the animation budget produce a fast app on their own. But that app would still feel slow, because the user would spend more time deciding how to do the thing than doing it. Keyboard-first design isn't speed for its own sake. It's making sure the work the engineering layers earned doesn't get spent on the user's input model.

The animation layer

All the work in the previous chapters can still be undone by one bad animation. If a button takes 300ms to visually respond, the user feels the lag regardless of how fast the underlying mutation was. The animation layer has to honor the speed budget the rest of the system earned.

Animate only what doesn't trigger layout

Browsers have three tiers of property changes, and the cost scales with how high each one is on the rendering pipeline. Composited properties (transform, opacity) hand the work to the GPU and run independent of the main thread. Paint-triggering properties (color, background-color, border-color, fill) skip layout but still redraw pixels. Layout-triggering properties (width, height, top, left, margin, padding) force the browser to recompute the position of every subsequent element on the page. Never animate those.

/* What Linear does */

.row:hover {

background-color: var(--color-bg-hover);

transition: background-color 0.12s;

}

.icon-arrow {

transform: translateX(0);

transition: transform 0.15s;

}

/* What you'd write if you didn't know better */

.row:hover {

margin-left: 2px; /* triggers layout for every row beneath */

transition: all 0.2s; /* and now you're animating margin */

}

The margin-left version recomputes the layout of every row beneath the hovered one, on every frame, for the full 200ms of the transition. On a long issue list that's the difference between buttery and jank.

Know when to hold back

It's easy to get carried away with animations. But in a tool used every day, the animations you'd love on a marketing site start to get in the way. Even a small hover delay, in the wrong place, becomes the thing the user notices.

Linear nails most of this. The command palette is the one I'd argue is too slow, but I've gotten stubborn over the years. The reason the rest works is that animations reference their origin. The status popover scales out of the status pill. The agent panel slides in from its toggle. The motion is doing spatial work, telling the user where the new element came from, rather than fading in from nowhere as decoration.

Keep durations short and snappy

/* variables from Linear's stylesheet */

--speed-highlightFadeIn: 0s;

--speed-highlightFadeOut: .15s;

--speed-quickTransition: .1s;

--speed-regularTransition: .25s;

--speed-slowTransition: .35s;

Most design systems default longer. Material's standard duration is 200ms, iOS's spring closer to 350ms. Defaulting to shorter transitions is one of the easiest ways to make an app feel faster, and Linear's defaults sit well below the industry norm.

Linear takes this one step further with asymmetric timing on enter and exit. Hover highlights, popovers, and the agent panel appear instantly when you summon them, then fade out over 150ms when you dismiss them.

Conclusion

There are so many more details I could cover that make Linear feel fast. The reality is there's no single thing that makes an app performant. It's the culmination of hundreds of decisions.

What I love about Linear's approach is how unconventional it is. No Next, no TanStack, no fancy framework. They decided early on what architecture would serve their users best, and they've stayed true to it. The result is a client-side rendered app that's faster than server-rendered ones, and a stack simple enough that the whole architecture fits in your head.

The shape of it is roughly this. The server is a sync target rather than a source of truth. The database lives in the browser. Mutations apply locally first and reconcile in the background. The first load ships less code in more pieces, with a service worker precaching the rest while the user is still on the login page. Auth renders before it verifies. The sync engine hydrates from IndexedDB into per-property MobX observables, so a 50-issue update is 50 cell re-renders rather than a list re-render. Animations stay on the GPU, the quickest transitions sit at the 100ms cause-and-effect threshold while even the slowest stay at 350ms, and layout-triggering properties are never animated. The input model is keyboard-first: every common action has a shortcut, and Cmd-K is a fuzzy search over the local pool.

The hard part isn't the implementation. It's the dedication to the craft over years, as the codebase matures, expands, and pushes up against new constraints. Respect!