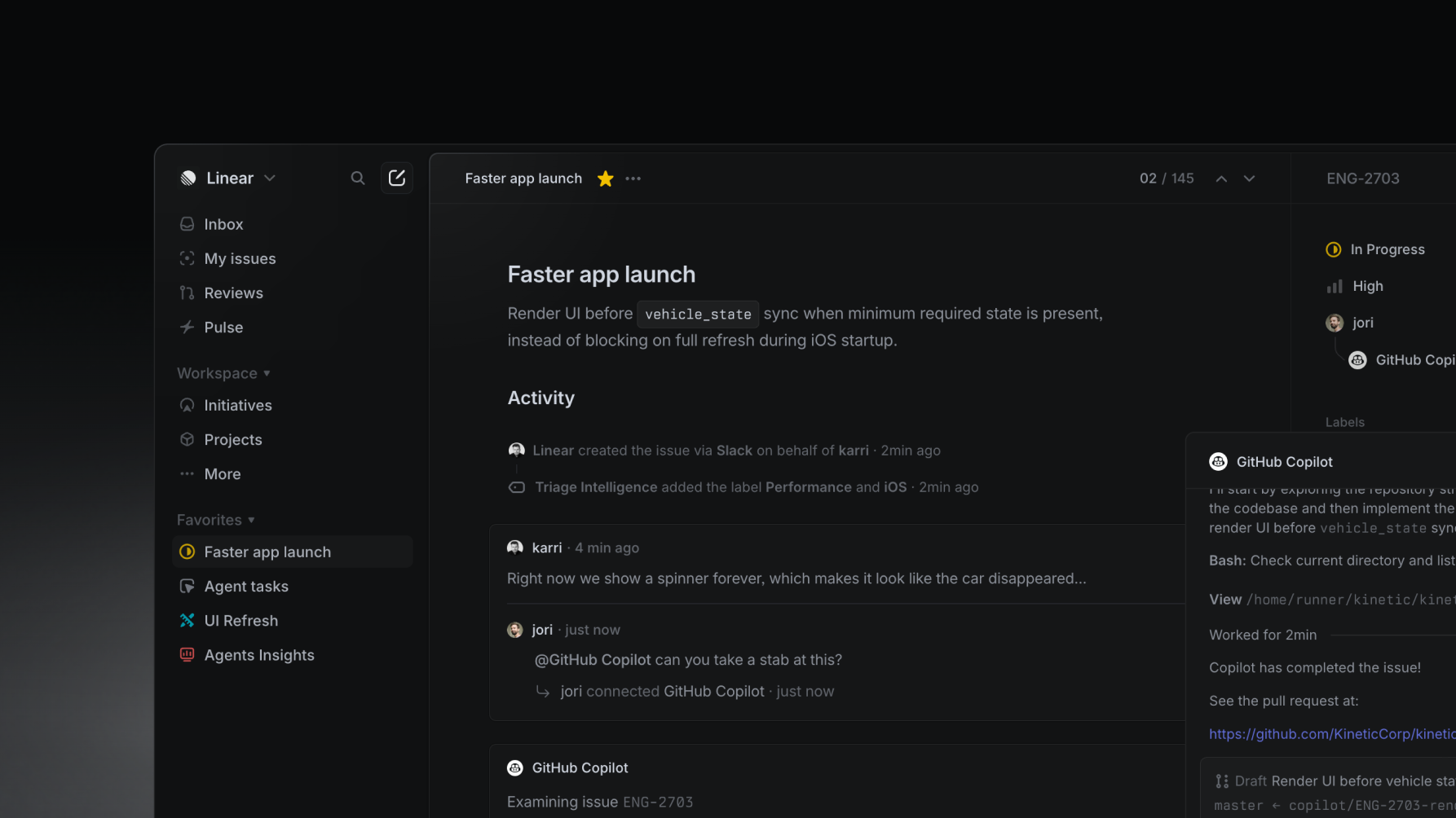

Why Linear feels so fast: a technical breakdown

Six milliseconds. That's roughly how long Linear takes to update an issue's status — click to repaint — on a typical workspace. A traditional CRUD app doing the same thing burns about three hundred. The fifty-fold gap isn't one big trick; it's about thirty stacked decisions, no single one of them dramatic, layered across the bundler, the database, the runtime, the fonts, the cookies, and the way the app refuses to wait for the network on principle.

It is also built on the most boring stack in modern web development: React, TypeScript, MobX, Postgres, a CDN. No edge database, no React Server Components, no Bun. Every piece is at least five years old. The application that comes out the other end is, for many engineers, the fastest web app they've ever used. Either the boring stack is misunderstood, or the gap between technology and experience lives somewhere the typical postmortem never looks.

Chapter 1: Inverting the server

Most web apps live inside the same loop. The user clicks. The browser fires an HTTP request. A server queries a database, formats a response, sends it back. The browser repaints. The end result is a spinner, a skeleton, or a frozen UI for a few hundred milliseconds while the app waits on the network.

Linear inverts the relationship. The server is no longer the source of truth — it's a synchronization target. The actual database the UI reads from is in the browser, in IndexedDB. Mutations apply locally first, then asynchronously push to the server, which broadcasts deltas back to other clients via WebSocket. Every UI interaction reduces, structurally, to two lines:

// A traditional web app updating the server

async function updateIssue({ issue }) {

showSpinner();

const response = await fetch(`/api/issues/${issue.id}`, {

method: "PATCH",

body: JSON.stringify({ title: issue.title }),

});

const updated = await response.json();

setIssue(updated);

hideSpinner();

}

// vs Linear

issue.title = "Faster app launch";

issue.save();

The first line mutates an in-memory MobX observable, a microsecond write. The second queues a transaction that the engine batches and flushes to the server on the next microtask. The UI re-renders synchronously off the local mutation, before the network has done anything at all. There are no spinners because there is nothing to wait for.

"Literally the first lines of code that I wrote was the sync engine, which is very uncommon to what you usually do when you're a startup." — Tuomas Artman, Local-First Conf 2024

A peek into Linear's stack

From their public posts and engineering talks:

Frontend

React 18+

MobX (observable graph, granular re-renders)

TypeScript

Rolldown-Vite + plugin-react-oxc (mid-2025; previously Rollup; previously Parcel)

ProseMirror (rich text editor, high-confidence inference)

Radix UI primitives (popovers, menus, focus traps)

Emotion (CSS-in-JS via @emotion)

Comlink (Worker RPC)

Inter Variable + Berkeley Mono (single woff2 each, font-display: swap)

Backend

Node.js + TypeScript (single language for all server code)

PostgreSQL on Cloud SQL (issues table partitioned 300 ways)

Memorystore Redis (event bus + cache + sync cursors)

pgvector (similar-issue detection, OpenAI ada embeddings)

Kubernetes on GCP (one workload per concern)

Cloudflare Workers (multi-region edge proxy)

Other clients

Desktop: Electron (same web JS, native chrome)

Mobile: Swift (iOS) + Kotlin (Android), each a separate full reimplementation

Marketing

Next.js (static)

styled-components

Inline SVG sprite

Two things stand out. The stack is conspicuously simple and leans into proven technology: no edge databases, no React Server Components, no Bun. The interesting exception: Rust is in production, but quarantined. Linear ships Difftastic, Wilfred Hughes's tree-sitter-backed syntactic differ, compiled to a 1.2 MB WebAssembly module and loaded by a Web Worker only when the user opens a PR diff. A second worker runs TensorFlow.js for client-side code-language detection on un-tagged snippets. Neither touches the main thread, neither blocks the boot path. That is the more sophisticated version of the "boring stack" claim: it isn't that Linear refuses interesting tools, it's that the interesting tools are hidden where they earn their weight.

The other thing that stands out: the mobile clients are written twice, fully native, instead of reusing the web sync engine in a webview. That is the most expensive line on the chart, and it tells you everything about how this team thinks about latency.

Chapter 2: Making the first load feel instant

The bundler arc: Parcel, Rollup, Vite, Rolldown

Linear's build pipeline has moved through four bundlers. Each move cut something measurable. In March 2021 they migrated from Parcel to Rollup; the changelog spells out the gains:

50% less code shipped.

30% smaller after compression.

Cold-cache page loads 10 to 30% faster.

Time-to-first-paint of the active-issues view down 59% on Safari and 40 to 50% elsewhere (Linear's metric is startup-to-paint, not component render time).

Memory usage down 70 to 80%.

Most of that came from a combination of decisions Linear's changelog calls out together: targeting only modern browsers, better dead-code elimination, and aggressive code splitting. Dropping legacy support is the headline (no polyfills, no ES5 transpilation, no nomodule fallback) but the dead-code and chunking work matters just as much.

Vite 8's release notes cite Linear's production CI build dropping from 46 seconds to 6 seconds after the move to Rolldown-Vite. Separately, Linear engineers reported the same production build (across roughly eleven thousand modules) accelerating again after swapping plugin-react-swc for plugin-react-oxc, once VoidZero added native styled-components support to oxc. The combined bundle ships about 21 MB of minified JavaScript and 40 MB of WebAssembly — a real footprint, but one that's deliberately partitioned across hundreds of route-level chunks the user only fetches on demand.

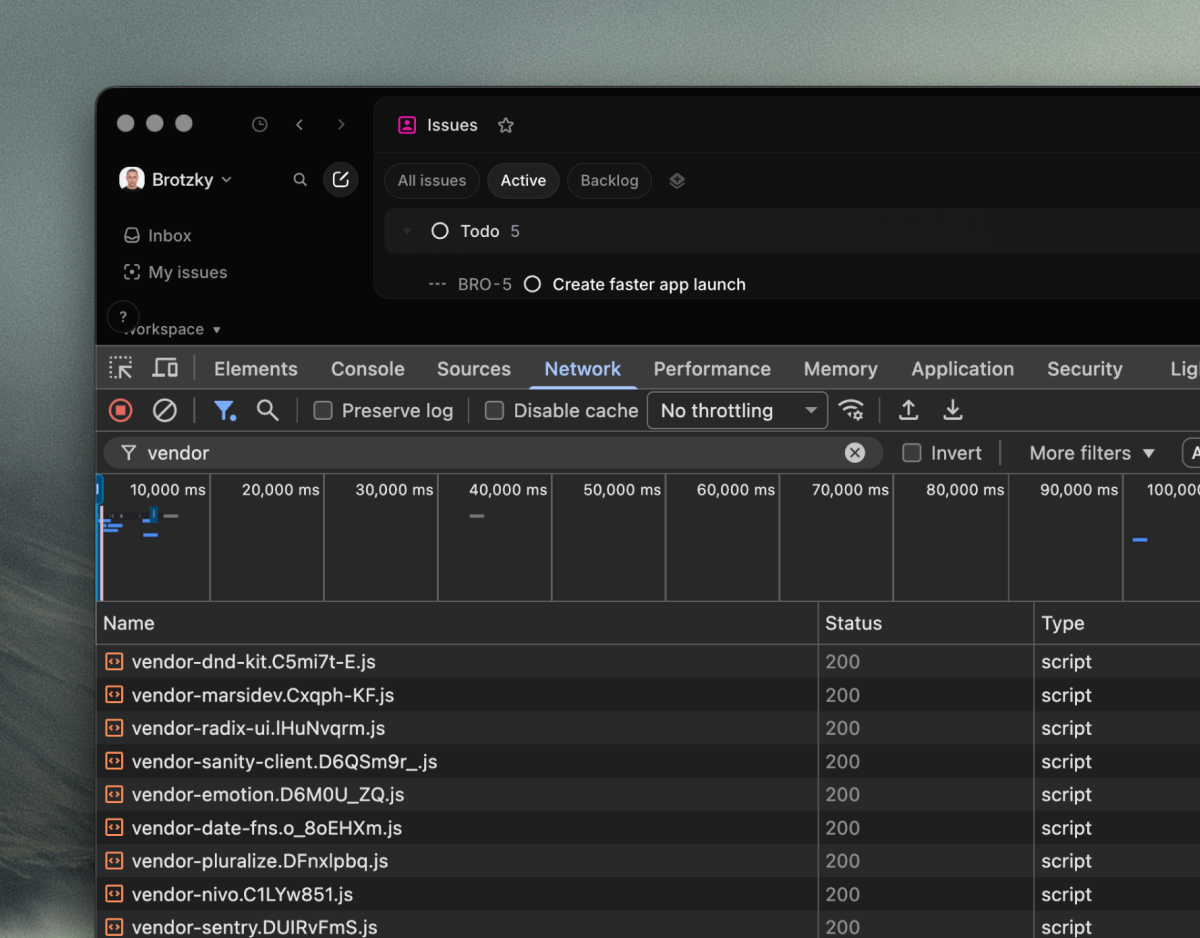

The chunk graph implies the build config:

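// vite.config.ts (reconstruction; matches observed chunk graph)

import { defineConfig } from "vite";

// Assumed import: the text names plugin-react-oxc as the React plugin in use.

import react from "@vitejs/plugin-react-oxc";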

export default defineConfig({

plugins: [react()],

build: {

target: "esnext", // no legacy syntax, no polyfills

cssMinify: "lightningcss",

modulePreload: { polyfill: false },

rollupOptions: {

output: {

// One chunk per npm package > ~3 KB. Cache invalidation

// becomes per-library instead of per-app-revision.

manualChunks(id) {

if (id.includes("node_modules")) {

const pkg = id.match(/node_modules\/([^/]+)/)?.[1];

if (pkg) return `vendor-${pkg}`;

}

},

},

},

},

});

The lesson isn't which bundler to pick. The sequence is the lesson: drop legacy browsers, go native ESM, pick a modern bundler, move to a Rust toolchain. Each step is small. Stacked, they cut Linear's first-load JavaScript roughly in half and their build time by an order of magnitude.

Bundle composition

Every npm package gets its own chunk and its own hash, cached independently. A traditional vendor.js invalidates the entire dependency graph on any bump. Linear's chunking turns vendor caching from binary to fine-grained: the live login HTML preloads 32 distinct vendor-* modules — MobX, Radix UI, Comlink, idb, ProseMirror, y-prosemirror, Emotion, Lodash, date-fns, Sentry, etc. — each with its own content hash. Bumping a single dependency invalidates one chunk; the rest stay cached.
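As a rough sketch of the per-package naming idea, the helper below maps a module path to a chunk name. The name `vendorChunkName` and the handling of scoped packages and nested `node_modules` folders are assumptions for illustration, not something read out of Linear's build.

```typescript
// Hypothetical helper: derive a per-package chunk name from a module path.
// Assumption: scoped packages (@scope/name) keep both segments, so that
// @emotion/react and @emotion/styled do not collapse into one chunk.
function vendorChunkName(id: string): string | undefined {
  const normalized = id.replace(/\\/g, "/"); // tolerate Windows separators
  const marker = "node_modules/";
  // lastIndexOf picks the innermost package when node_modules folders nest.
  const start = normalized.lastIndexOf(marker);
  if (start === -1) return undefined; // application code: leave it to the bundler

  const [first = "", second = ""] = normalized.slice(start + marker.length).split("/");
  const pkg = first.startsWith("@") ? `${first}/${second}` : first;

  // Chunk names end up in file names, so flatten "@scope/name" to "scope-name".
  return `vendor-${pkg.replace(/^@/, "").replace(/\//g, "-")}`;
}
```

A function like this could be returned from `manualChunks(id)`; each distinct return value becomes its own independently hashed file.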

The service worker is more substantial than its first impression. The linear-sw-v16 cache is filled lazily by a Workbox-style precache manifest baked into the SW source — 1,218 hashed assets, mostly route-level JS chunks with the rest icons and fonts. The page tells the SW which assets to fetch via a cacheAssets message, the SW pulls them in batches of five, and content-hashed URLs are skipped if they're already cached (the hash means the response can never have changed). When a new manifest arrives, the SW diffs against the live cache and deletes orphaned entries. The fetch handler bypasses dynamic paths entirely — /graphql, /sync/, /auth/* — leaving the data layer to the sync engine and the chunk layer to the HTTP cache. The service worker also handles Web Push: a push handler shows OS-level notifications, and a notificationclick handler routes the click back to the linked issue. That's how Linear users get notified when the app is closed.
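The precache bookkeeping described above can be sketched as a pure function: given the new manifest and the URLs already cached, work out what to fetch (in batches of five) and what to delete. `planCacheUpdate` and `CachePlan` are hypothetical names; the real service worker would drive this logic through the Cache API.

```typescript
interface CachePlan {
  batches: string[][]; // URLs still to fetch, grouped into batches
  orphans: string[];   // cached URLs that are no longer in the manifest
}

// Sketch only: assumes every URL is content-hashed, so a cached URL
// can never be stale and is safe to skip.
function planCacheUpdate(manifest: string[], cached: string[], batchSize = 5): CachePlan {
  const cachedSet = new Set(cached);
  const wanted = new Set(manifest);

  const missing = manifest.filter((url) => !cachedSet.has(url));
  const batches: string[][] = [];
  for (let i = 0; i < missing.length; i += batchSize) {
    batches.push(missing.slice(i, i + batchSize));
  }

  // Anything cached but absent from the new manifest is an orphan.
  const orphans = cached.filter((url) => !wanted.has(url));
  return { batches, orphans };
}
```

Keeping the plan separate from the fetching makes the diff step easy to test without a browser.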

Fonts

Font loading is one of those details a lot of apps get wrong. The failure modes are visible: invisible text for half a second, layout shifts as the real font swaps in, double-fetched resources because the preload didn't match. Linear's setup avoids all three:

<!-- in <head> of index.html -->

<link rel="preload"

href="https://static.linear.app/fonts/InterVariable.woff2?v=4.1"

as="font" type="font/woff2" crossorigin="anonymous">

<link rel="preconnect" href="https://static.linear.app" crossorigin>

@font-face {

font-family: "Inter Variable";

font-weight: 100 900;

font-display: swap;

src: url(https://static.linear.app/fonts/InterVariable.woff2?v=4.1)

format("woff2");

}

/* Italic and Berkeley Mono follow the same shape, single woff2 each. */

Variable fonts cover the full 100 to 900 weight axis in a single woff2, eliminating per-weight requests. font-display: swap renders the fallback stack immediately and swaps to Inter when it loads: FOUT instead of FOIT. The trick that's easy to miss: crossorigin="anonymous" on the preload tag. Without it, the browser preloads the font, then fetches it again when CSS later references it, because the two requests have different CORS modes. crossorigin on the preload makes the browser reuse the cached one. Inter Variable ships as a single woff2 of a few hundred kilobytes with a cache TTL on the order of months. Pay once, almost never again.

The loader shell

Inlined in <head> is just enough CSS to paint the loading state with no external stylesheet fetched:

<!-- abridged from linear.app/login head -->

<style>

:root {

--color-bg-level-0: #ffffff;

--color-line-primary: #e5e5e5;

--color-text-primary: #0f0f10;

/* token names are illustrative; Linear's real ones are

--bg-sidebar-light/dark, --bg-base-color-light/dark,

--bg-border-color-light/dark, --content-color-light/dark,

--sidebar-width, --agent-toolbar-height */

}

html { font-family: "Inter Variable", Arial, Helvetica, sans-serif;

text-rendering: optimizeLegibility;

-webkit-font-smoothing: antialiased; }

#logo { transform: translateZ(0); } /* GPU layer */

@keyframes logoBackgroundPulse { /* subtle ambient pulse */ }

@keyframes fadeIn { from { opacity: 0 } to { opacity: 1 } }

</style>

<script>performance.mark("appStart");</script>

transform: translateZ(0) forces the logo onto its own GPU layer, so the ambient pulse animates without triggering layout or paint on the rest of the page. The fallback font stack (Arial and Helvetica) is chosen for metrics close to Inter, so the layout shift on swap is minimal in practice. (Full elimination requires size-adjust / ascent-override / descent-override; Linear leans on the natural similarity instead.) Most apps ship font-display: swap and stop there, without realizing the fallback's metrics are now responsible for the CLS jolt when the real font arrives.

The inline boot script does more than mark the start. Before any bundle has loaded, it reads localStorage.splashScreenConfig, merges with any session override, and applies the user's theme tokens — sidebar background, base color, border, sidebar width — directly to document.documentElement.style. It detects color-scheme preference and Electron context. By the time the first JavaScript bundle parses, the loading screen is already correctly themed and sized. This is the same trick as the auth flow, applied to a different problem: don't wait for the bundle to know whether you're a dark-mode user. Read it from localStorage on first paint, before a single byte of application JS executes.

Render first, authenticate second

Authentication is where most apps give up their performance budget. The conventional flow: redirect, validate, fetch session, fetch user, fetch workspace, then render. One to three seconds before the user sees anything.

Linear treats auth the same way it treats mutations. Assume the happy path, verify in the background.

Inspecting the browser's cookie store on a logged-in session shows two cookies in play (the names below match what's currently observable; the JWT payload is the decoded `session` cookie):

// The two cookies set to track auth

session HttpOnly, Secure, SameSite=Lax

JWT signed HS256

Decoded payload:

{

"v": 2,

"sessionId": "a88d252b-d500-41ae-adaa-5fe294e6d1b6",

"userAccountId": "a0420f2b-35ec-47c3-ad1e-21b79bde468c",

"createdAt": "2026-05-05T01:06:07.532Z",

"iat": 1777943167

}

loggedIn Not HttpOnly, readable from document.cookie

Value: 1

A flag. Tells the JS bundle a session exists.

The real session token is HttpOnly, so JavaScript can't read it. But that also means the bundle can't tell whether the user is logged in. So Linear writes loggedIn=1 alongside it, readable from JS, carrying no security value on its own.

When the bundle boots, it reads loggedIn, finds 1, and assumes the user is authenticated. IndexedDB opens, the local store hydrates, the UI paints. By the time the server finishes verifying the JWT, the user is already looking at their issues. If the JWT is rejected, the app redirects to login — it almost never is, because the cookie was set by the same server that's about to verify it.
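A minimal sketch of that boot decision, assuming the cookie string is passed in (in a browser it would be `document.cookie`). `readCookie`, `chooseBootPath`, and the `BootPath` labels are hypothetical names; only the `loggedIn=1` flag comes from the observed cookies.

```typescript
type BootPath = "render-from-local-store" | "show-login";

// Parse a "name=value; other=value" cookie string and return one value.
function readCookie(cookieString: string, name: string): string | undefined {
  for (const pair of cookieString.split(";")) {
    const eq = pair.indexOf("=");
    if (eq === -1) continue;
    if (pair.slice(0, eq).trim() === name) return pair.slice(eq + 1).trim();
  }
  return undefined;
}

// The flag carries no authority: it only decides whether to paint
// optimistically. The server still verifies the HttpOnly session cookie.
function chooseBootPath(cookieString: string): BootPath {
  return readCookie(cookieString, "loggedIn") === "1"
    ? "render-from-local-store"
    : "show-login";
}
```

If the background verification later fails, the app would fall back to the login redirect described above.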

Most apps wait for the server to confirm who you are. Linear assumes, renders, and reconciles. The WebSocket also gets a Cloudflare optimization for free: when the Worker forwards the connection through unmodified, the runtime hands it off to a faster code path that skips re-processing the response back through the V8 isolate.

Chapter 3: The sync engine

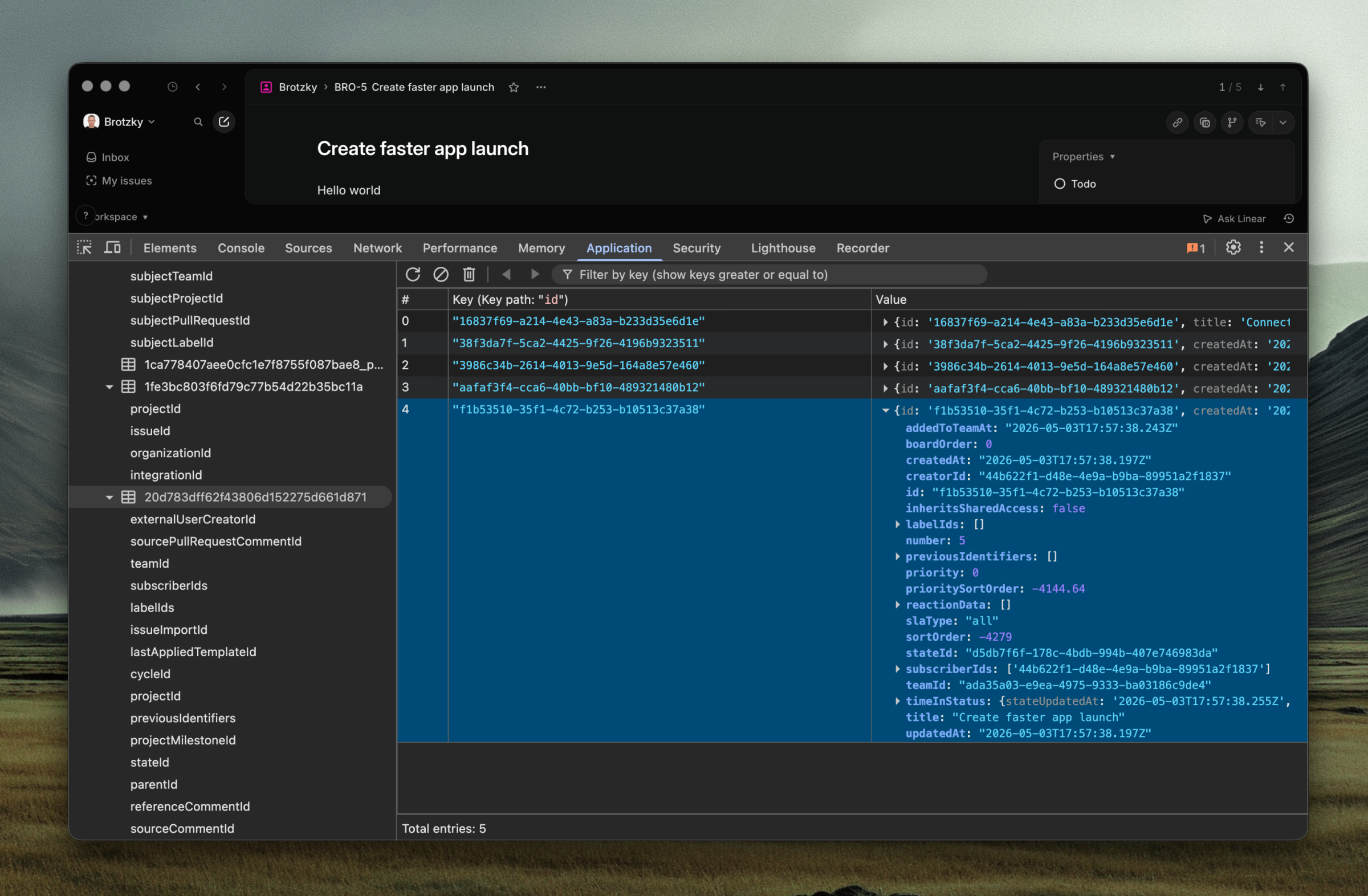

The local pool is the source of truth (for the UI)

The in-memory MobX object pool is what the UI reads; IndexedDB is the durable cache underneath. The pool is hydrated from IDB on first access and stays in sync via the same SyncAction stream. On bootstrap, the workspace skeleton streams in: 58 model types eagerly hydrated out of roughly 80 in the data graph. The two heaviest tables — Issue and Comment — are excluded from the eager load and lazy-hydrate on demand. Once a record is in the pool, every query goes there first.

There are five load strategies in the schema (instant, lazy, partial, explicitlyRequested, local), one of which earns its own mention. The local strategy is how Linear engineers prototype features with no backend. As Tuomas put it on the Local-First Conf 2024 stage: "You can say I've got a new model, I don't want it to be sent to the back end because there is no back end that exists for this yet — but you probably still want to save those changes locally so everything else will work perfectly." The sync engine writes local-strategy models to IndexedDB and treats them as durable; the rest of the application has no idea they aren't real.

This is what kills the spinner. Click into a project — the issues are already there. Filter by assignee — the index is already built. There's nothing to fetch because there's nothing missing.

Mutations don't wait for the network

When you update an issue, three things happen in the same tick: the MobX observable updates immediately so the UI reflects the change, the mutation is written to a __transactions object store in IndexedDB so it survives a refresh, and it's queued for the server. Crucially, the local database row is not modified until the server's delta arrives. The UI shows the new value because MobX has it in memory, but the on-disk truth waits for confirmation. If the server rejects, the in-memory observable reverts and the user sees a brief flicker. If it accepts, the delta updates the local DB and everything stays consistent.
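The separation between the in-memory value and the persisted row can be sketched as below. This is a simplified model under stated assumptions: plain Maps stand in for the MobX pool, the IndexedDB rows, and the `__transactions` store, and `LocalStore`, `confirm`, and `reject` are hypothetical names.

```typescript
type Row = Record<string, unknown>;

interface PendingTx {
  id: number;
  modelId: string;
  field: string;
  original: unknown;
  next: unknown;
}

class LocalStore {
  memory = new Map<string, Row>(); // stands in for the MobX object pool
  disk = new Map<string, Row>();   // stands in for the persisted model rows
  pending: PendingTx[] = [];       // stands in for the durable transaction queue
  private nextId = 1;

  update(modelId: string, field: string, next: unknown): PendingTx {
    const row = this.memory.get(modelId);
    if (!row) throw new Error(`unknown model ${modelId}`);
    const tx: PendingTx = { id: this.nextId++, modelId, field, original: row[field], next };
    row[field] = next;     // the UI-visible value changes immediately
    this.pending.push(tx); // queued; a real client would also send it
    return tx;
  }

  // Server accepted: the confirming delta is what finally updates the row.
  confirm(tx: PendingTx): void {
    this.disk.set(tx.modelId, { ...(this.disk.get(tx.modelId) ?? {}), [tx.field]: tx.next });
    this.drop(tx);
  }

  // Server rejected: only the in-memory value needs restoring.
  reject(tx: PendingTx): void {
    const row = this.memory.get(tx.modelId);
    if (row) row[tx.field] = tx.original;
    this.drop(tx);
  }

  private drop(tx: PendingTx): void {
    this.pending = this.pending.filter((p) => p.id !== tx.id);
  }
}
```

Because the persisted row is untouched until confirmation, rollback is a single in-memory assignment.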

The user never waits to see their own change. Retry, rollback, durability across refreshes — all background.

The four-queue transaction lifecycle

When you issue.save(), the mutation enters a four-stage pipeline. Created — fresh, just constructed, in a createdTransactions array. Queued — written to a __transactions object store in IndexedDB so it survives a refresh, awaiting batch dispatch. Executing — sent to the server over GraphQL, awaiting a response. Completed-but-unsynced — the server accepted the mutation, but the corresponding lastSyncId hasn't yet arrived as a delta packet. The local IndexedDB row is not updated until that delta arrives — which is what makes rollback on rejection trivial. The on-disk truth never moved.

A microtask scheduler (commitCreatedTransactions) buckets every transaction created in the same event-loop tick under one batchIndex. Mutating five fields of an issue inside one event handler is one HTTP request — the GraphQL document ships them as aliased operations: mutation { o1: issueUpdate(...) { lastSyncId }, o2: documentContentCreate(...) { lastSyncId } }. The mutation only ever requests lastSyncId back; nothing else.
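The same-tick batching can be sketched roughly as follows. `TransactionBatcher`, its `sent` log, and the injectable `schedule` function are assumptions made so the sketch runs without a network; only the aliased `o1`, `o2` shape and the `lastSyncId` selection come from the description above.

```typescript
interface Tx {
  operation: string; // e.g. "issueUpdate"
  args: string;      // pre-serialized GraphQL arguments
  batchIndex?: number;
}

class TransactionBatcher {
  readonly sent: string[] = []; // stands in for HTTP requests
  private created: Tx[] = [];
  private scheduled = false;
  private batchIndex = 0;
  private schedule: (fn: () => void) => void;

  // Default scheduler: run the commit on the next microtask.
  constructor(schedule: (fn: () => void) => void = (fn) => { void Promise.resolve().then(fn); }) {
    this.schedule = schedule;
  }

  add(tx: Tx): void {
    this.created.push(tx);
    if (!this.scheduled) {
      this.scheduled = true; // one commit per tick, however many adds
      this.schedule(() => this.commit());
    }
  }

  private commit(): void {
    const batch = this.created;
    this.created = [];
    this.scheduled = false;
    const index = this.batchIndex++;
    batch.forEach((tx) => { tx.batchIndex = index; });
    // One document, one aliased operation per transaction.
    const body = batch
      .map((tx, i) => `o${i + 1}: ${tx.operation}(${tx.args}) { lastSyncId }`)
      .join(", ");
    this.sent.push(`mutation { ${body} }`);
  }
}
```

Passing a manual scheduler makes the batching observable in tests; with the default, everything added in one event handler lands in a single document.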

There are six concrete transaction types in the bundle. Five carry server actions: CreationTransaction, UpdateTransaction, DeletionTransaction, ArchivalTransaction, and UnarchiveTransaction. The sixth, LocalTransaction, is a client-only wrapper used by models with the local load strategy — it makes a model behave like it's saving without actually shipping anything to the server. Of the five server-actionable types, only CreationTransaction is fully idempotent — the client mints the UUID before the network sees the request. DeletionTransaction is not: a mutation sent but never acked (a tab crash mid-flight) gets replayed on next boot, and the second attempt may hit a model the first attempt already deleted. Linear accepts the rare "can't delete a model that doesn't exist" error as the price of durable, replayable mutations.

How issue.title = "Faster app launch" actually works

Linear's models are TypeScript classes whose properties are decorated at definition time. The decorators (@ClientModel, @Property, @Reference) install getters and setters via Object.defineProperty. The first time a property is read or written, the setter populates a hidden per-instance backing slot (recently renamed from __mobx to __data) with a MobX box and routes future access through it. Until then the field stays cold — no observers, no tracking overhead. Collections take this further: ReadonlyCollection has its own makeObservable() method that fires on first read of .elements. A workspace with hundreds of collections only enters MobX tracking for the ones the UI actually touches. That's why issue.title = "Faster app launch" is a microsecond mutation: the setter resolves to a single box write.
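A minimal sketch of the lazy-box idea, with loud assumptions: `Box` is a hand-rolled stand-in for a MobX observable box, a WeakMap plays the role of the hidden per-instance backing slot, and `lazyProperty` is a plain function where the real code uses decorators.

```typescript
// Stand-in for a MobX box; not MobX's actual implementation.
class Box<T> {
  private value: T;
  private observers = new Set<(value: T) => void>();
  constructor(initial: T) { this.value = initial; }
  get(): T { return this.value; }
  set(next: T): void {
    this.value = next;
    this.observers.forEach((fn) => fn(next));
  }
  observe(fn: (value: T) => void): void { this.observers.add(fn); }
}

// Per-instance backing storage, created only on first access.
const backing = new WeakMap<object, Map<string, Box<unknown>>>();

function boxFor(instance: object, key: string): Box<unknown> {
  let slots = backing.get(instance);
  if (!slots) { slots = new Map(); backing.set(instance, slots); }
  let box = slots.get(key);
  if (!box) { box = new Box<unknown>(undefined); slots.set(key, box); }
  return box;
}

function isTracked(instance: object, key: string): boolean {
  return backing.get(instance)?.has(key) ?? false;
}

// Install an accessor on the prototype; the box appears on first use.
function lazyProperty(prototype: object, key: string): void {
  Object.defineProperty(prototype, key, {
    configurable: true,
    get(this: object) { return boxFor(this, key).get(); },
    set(this: object, value: unknown) { boxFor(this, key).set(value); },
  });
}

class Issue {
  declare title: string;       // "declare" avoids shadowing the accessor
  declare description: string;
}
lazyProperty(Issue.prototype, "title");
lazyProperty(Issue.prototype, "description");
```

Writing `issue.title` creates exactly one box; `description` stays cold until something reads or writes it.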

One delta, one cell

Every server-confirmed change in Linear arrives at the client as a SyncAction over WebSocket: a small JSON object with an action code (I / U / D / A — insert, update, delete, archive), a modelId, and the changed data. Outbound mutations from the client go the other direction over GraphQL, packaged as Transaction objects (UpdateTransaction, CreationTransaction, DeletionTransaction, etc.); the server applies them, increments lastSyncId, and the resulting delta comes back as a SyncAction. The client applies it by writing to the corresponding MobX observable.

There are eight action codes total, not the four most write-ups list. I, U, D, A cover insert, update, delete, archive. V is unarchive. C (covering) is undocumented externally. The two interesting cases are G and S — sync-group transitions. When a permission changes mid-session, the server sends a single G or S action. Joining a team causes a synchronous partial bootstrap on the new sync group's UUID before the client processes the next packet; leaving causes the local IndexedDB to delete every model tagged to it. The local pool stays structurally a subset of what you're authorized to see, never more.
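To make the action codes concrete, here is a hedged sketch of a client-side reducer for the five data-carrying codes. The `modelName` field, the `Pool` shape, and the `applySyncAction` name are assumptions; C, G, and S are left out because their handling depends on bootstrap machinery this sketch does not model.

```typescript
type ActionCode = "I" | "U" | "D" | "A" | "V";

interface SyncAction {
  action: ActionCode;
  modelName: string; // assumed field, used here only to key the pool
  modelId: string;
  data?: Record<string, unknown>;
}

interface PooledModel {
  archived: boolean;
  fields: Record<string, unknown>;
}

type Pool = Map<string, PooledModel>;

function applySyncAction(pool: Pool, a: SyncAction): void {
  const key = `${a.modelName}:${a.modelId}`;
  const existing = pool.get(key);
  switch (a.action) {
    case "I": // insert
      pool.set(key, { archived: false, fields: { ...a.data } });
      break;
    case "U": // update: merge only the changed fields
      if (existing) Object.assign(existing.fields, a.data);
      break;
    case "D": // delete
      pool.delete(key);
      break;
    case "A": // archive
      if (existing) existing.archived = true;
      break;
    case "V": // unarchive
      if (existing) existing.archived = false;
      break;
  }
}
```

In the real client the update branch would write to individual observables, which is what keeps re-renders scoped to the changed field.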

Every property on every model is its own observable, and every React component that reads one is wrapped in observer(). A delta that updates one field of one issue re-renders exactly the components that read that field — not the parent list, not the sidebar, just the cell. A 50-issue update is 50 cell re-renders, not a list re-render. The cells still pay React's per-component reconciliation cost; the win is what doesn't render.

lastSyncId: the resync primitive

Every WebSocket frame the server sends carries a lastSyncId, a monotonically-increasing counter that is database-global — a single number space across every workspace on Linear's backend. Sync groups (the UUIDs corresponding to your user, team memberships, and roles) gate which deltas the server sends you, but the counter itself is shared. The client persists its high-water mark in IndexedDB. On reconnect — whether after a five-second drop or an eight-hour offline session — the client's first message is essentially "I'm at sync N, give me everything after." The server replies with the delta stream from N+1 to current.

This is the durability primitive. Local mutations in the pending-transactions table replay on next boot. Remote mutations missed during an offline window stream in via the same delta cursor. Going offline and coming back is the same code path as receiving a delta in real time — just with more deltas at once.
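The cursor-based catch-up can be sketched in a few lines. `SyncClient`, `Delta`, and `deltasAfter` are hypothetical names, and the in-memory array stands in for the server's delta log; the point is that live delivery and offline replay share one `apply` path.

```typescript
interface Delta {
  syncId: number; // position in the global, monotonically increasing counter
  modelId: string;
  field: string;
  value: unknown;
}

class SyncClient {
  lastSyncId = 0; // high-water mark; persisted in the real client
  readonly state = new Map<string, Record<string, unknown>>();

  apply(delta: Delta): void {
    if (delta.syncId <= this.lastSyncId) return; // already applied
    const row = this.state.get(delta.modelId) ?? {};
    row[delta.field] = delta.value;
    this.state.set(delta.modelId, row);
    this.lastSyncId = delta.syncId;
  }
}

// Hypothetical server side: everything after the client's cursor, in order.
function deltasAfter(log: Delta[], cursor: number): Delta[] {
  return log.filter((d) => d.syncId > cursor).sort((a, b) => a.syncId - b.syncId);
}
```

Because the counter is shared across workspaces, the IDs one client sees are increasing but not contiguous, which is why the sketch compares with `>` rather than expecting `N + 1`.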

Reconnect mechanics

Reconnect uses quadratic backoff capped at 30 seconds:

reconnectBackoffCount = Math.min(reconnectBackoffCount + 1, 12);

delay = Math.min(30_000, (200 + Math.random() * 100) * reconnectBackoffCount ** 2);

Attempt 1 waits roughly 200 to 300 ms; by attempt 12 the delay sits at or just under the 30 s cap. The clever piece is the recovery: each successful pong lowers the counter via Math.max(reconnectBackoffCount - 2, 0), so it unwinds twice as fast as it wound up, and a client that reached the maximum of 12 is back to zero after six healthy pongs. There's also a disconnectedDueToUserIdle state: an IdleNotifier listens for mousemove, keydown, visibilitychange, touchstart, and actively closes the WebSocket on idle to cut server-side fan-out. Tabs left open for hours don't keep their connections alive; the connection reopens on the next interaction.
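The backoff arithmetic is easy to check when wrapped in pure functions. The function names and the injectable `random` parameter below are assumptions for testability; the constants and formulas are the ones quoted above.

```typescript
const MAX_BACKOFF_COUNT = 12;
const MAX_DELAY_MS = 30_000;

// Called on each failed attempt.
function bumpBackoff(count: number): number {
  return Math.min(count + 1, MAX_BACKOFF_COUNT);
}

// Quadratic growth with 200 to 300 ms of jittered base delay.
function reconnectDelay(count: number, random: () => number = Math.random): number {
  return Math.min(MAX_DELAY_MS, (200 + random() * 100) * count ** 2);
}

// Called on each successful pong.
function relaxBackoff(count: number): number {
  return Math.max(count - 2, 0);
}
```

With the jitter pinned to zero, attempt 1 gives 200 ms and attempt 12 gives 28,800 ms; any jitter above roughly 8% pushes attempt 12 into the 30,000 ms cap.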

The protocol vocabulary is small. Three commands the client sends — hshk (handshake, on connect), ping (keep-alive), presence (throttled lastActiveAt). Six the server sends — sync (the delta stream), pong, streamData (bootstrap data over the socket), ephemeralMap and ephemeralPrimitive (out-of-band data, never persisted), and push (Electron-only — relays to the OS notification system). One full-duplex connection, one tiny vocabulary, every other capability multiplexed on top.

What's actually inside IndexedDB

Open DevTools → Application → IndexedDB on a logged-in Linear tab and you'll see two kinds of database. linear_databases is the outer registry: one row per workspace you've signed into, supporting multi-account without leaking state across tenants. Each linear_<hash> is a per-workspace database; the <hash> is computed from the schema and the user identity. Inside, every model is its own object store named by hash (so the Issue table is something like 119b2a..., not Issue). Two specials live alongside: _meta holds lastSyncId, subscribedSyncGroups, backendDatabaseVersion, and per-model persisted flags that drive lazy hydration; __transactions holds the durable pending-mutation queue. Schema migrations are automatic: a __schemaHash computed from every model's name, property metadata, and schema version bumps the IndexedDB version on each release, and the upgrade callback runs the migration. There are no hand-written migration scripts; the schema is the migration.

Why the three pieces work together

Local-first reads are free. Optimistic writes feel free. Granular observables make re-renders free. Take any one away and the illusion breaks. A local DB without optimistic writes still spins on save. Optimistic writes without granular observables still jank on every delta. Granular observables without a local database still wait on initial load. React Query gives you cached reads. SWR gives you stale-while-revalidate. Redux Toolkit gives you optimistic updates. None of them gets you here, because the speed isn't a property of any single layer. It's a property of the system.

Two conflict-resolution strategies, one application

Linear runs two distinct conflict-resolution strategies side by side, chosen per data shape rather than per religion. Structured data (status changes, assignees, dates, scalars on every model) goes through the central server, which orders mutations and applies last-writer-wins per field. Unstructured prose — issue descriptions, document bodies, inline comments — runs on Yjs, which is a CRDT-based collaborative-editing library. The bundle ships vendor-y-prosemirror and vendor-lib0, with a CollaborativeEditor chunk that imports awareness and cursor from y-prosemirror.

Awareness — the protocol behind editor cursors and "is viewing" indicators — is multiplexed onto the same SyncWebSocket under a cmd: 'collabSession' envelope. Three message types share the channel: Yjs document state vectors, awareness updates, and an initial-state query. Awareness is ephemeral, never persisted, and tolerates eventual consistency, which is why it can run alongside the structured-data path without the conflict-resolution machinery that path requires. When a tab is hidden, a handleWindowHide listener clears the user's awareness state, so a colleague who Cmd-Tabs away stops appearing as a viewer. Most apps pick one religion. Linear pays for both, where each is cheap.

Splitting the sync engine into pieces

When the client first connects, it populates IndexedDB. There are three bootstrap modes (full, partial, local), chosen by whether this is a first visit, a return with a stale local DB, or a return with an up-to-date one. A real captured bootstrap query from production:

GET https://client-api.linear.app/sync/bootstrap?type=full&onlyModels=

WorkflowState, IssueDraft, Initiative, ProjectMilestone, ProjectStatus,

TextDraft, ProjectUpdate, IssueLabel, ExternalUser, CustomView,

ViewPreferences, Roadmap, …and 46 more (notably absent: Issue, Comment,

which are lazy-hydrated on demand)

The bootstrap protocol, on the wire

The response to that URL isn't a JSON document — it's NDJSON, one model per line, terminated by a _metadata_={...} envelope carrying lastSyncId, subscribedSyncGroups, and a per-model count. The streaming shape lets the client start parsing and writing into IndexedDB before the response finishes downloading, so cold-load time is bounded by the larger of network and CPU rather than their sum.
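A streaming parser for that shape might look like the sketch below. It assumes each model line is a standalone JSON object and that the envelope line starts with the literal prefix `_metadata_=`; the class name and the chunk-feeding API are hypothetical, and a real client would write each parsed model to IndexedDB instead of collecting it in an array.

```typescript
interface BootstrapMetadata {
  lastSyncId: number;
  [key: string]: unknown;
}

class BootstrapStreamParser {
  readonly models: unknown[] = [];
  metadata: BootstrapMetadata | undefined;
  private buffer = "";

  // Feed network chunks as they arrive; chunks may split a line anywhere.
  push(chunk: string): void {
    this.buffer += chunk;
    let newline = this.buffer.indexOf("\n");
    while (newline !== -1) {
      this.handleLine(this.buffer.slice(0, newline));
      this.buffer = this.buffer.slice(newline + 1);
      newline = this.buffer.indexOf("\n");
    }
  }

  // Flush whatever is left once the response ends.
  end(): void {
    if (this.buffer.length > 0) this.handleLine(this.buffer);
    this.buffer = "";
  }

  private handleLine(raw: string): void {
    const line = raw.trim();
    if (line === "") return;
    const prefix = "_metadata_=";
    if (line.startsWith(prefix)) {
      this.metadata = JSON.parse(line.slice(prefix.length)) as BootstrapMetadata;
    } else {
      this.models.push(JSON.parse(line));
    }
  }
}
```

The first model is available as soon as its newline arrives, which is what lets parsing overlap with the download.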

The metadata envelope also leaks an architectural detail: "method": "mongo". Behind the bootstrap endpoint is a MongoDB cache holding serialized model objects, sitting in front of Postgres. Linear tried Bigtable first; on the devtools.fm scaling-LSE episode, Tuomas notes Mongo turned out to be "three or four times faster" for this workload and they kept it. Memorystore Redis serves a different role — it's both the WebSocket pub/sub backbone for delta fan-out and a real-time cache, per Linear's own GCP customer-story post.

The protocol itself has been rewritten more than once. The original bootstrap was JSON-over-WebSocket; that was replaced with a GraphQL endpoint, which then choked under tens of thousands of catch-up packets because GraphQL waits for the full response before flushing. The current streaming-REST implementation is the third generation. A late-2024 optimization (splitToCacheableRequests) parallelizes the fetch across multiple smaller requests, each independently cacheable at Cloudflare's edge — turning a worst-case multi-megabyte cold load into a fan-out of warm hits.

How lazy hydration remembers what it fetched

After bootstrap, every "load this on demand" goes through a BatchModelLoader that buckets requests into three categories. Partial-index requests ("all comments for issue X") batch into a /sync/batch POST. Sync-group requests ("all models in this newly-joined team") trigger a partial bootstrap. Generic loads — the rare case — fall back to fetching whole models. After the response lands, the partial index is persisted to a <modelHash>_partial object store in IndexedDB, recording exactly which (model, indexedKey, value) triples have been fetched. The next time the app needs that slice, canSkipNetworkHydration returns true and the read goes straight to the local pool. The cache isn't just data; it's a memory of what's already been asked.
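The "memory of what's already been asked" reduces to a set of fetched triples. In this sketch `PartialIndexMemory` and `record` are hypothetical names and the set lives in memory; per the description above, the real record is persisted per model in IndexedDB.

```typescript
class PartialIndexMemory {
  private fetched = new Set<string>();

  // JSON-encode the triple so values containing separators cannot collide.
  private static key(model: string, indexedKey: string, value: string): string {
    return JSON.stringify([model, indexedKey, value]);
  }

  // Call after a batch response for this slice has been stored locally.
  record(model: string, indexedKey: string, value: string): void {
    this.fetched.add(PartialIndexMemory.key(model, indexedKey, value));
  }

  // True when this exact slice was already fetched, so the read can stay local.
  canSkipNetworkHydration(model: string, indexedKey: string, value: string): boolean {
    return this.fetched.has(PartialIndexMemory.key(model, indexedKey, value));
  }
}
```

For example, after recording `("Comment", "issueId", "issue-1")`, a second request for that issue's comments can be answered from the local pool, while comments for `issue-2` still go to the network.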

There's a second cleverness on top. PartialIndexHelper precomputes nested partial-index reachability up to three levels deep. An issue references a cycle; a comment references an issue; therefore a query of "all comments for issues in this cycle" walks the chain and resolves with a single round-trip. Most apps would do this with three sequential queries, or a custom server endpoint per nested case. Linear builds the chain client-side and asks the server one question.

There's also a separate REST endpoint, /sync/delta?lastSyncId=N&toSyncId=M, which returns SyncActions between two cursors. The WebSocket carries deltas in real time; /sync/delta is the catch-up path used when a client reconnects after a long enough gap that replaying via the socket would be expensive. The choice between them is implicit — the client picks the cheaper path based on the size of the lag.

Questions a careful reader will already be asking

Two users edit the same issue title at once — what happens? Linear handles this with transaction rebasing, not naive last-writer-wins. Each UpdateTransaction carries both the original and next value. When a remote delta arrives that changes the same field while your transaction is still pending, Linear updates the original field of your pending transaction to match — leaving your in-memory model showing your value. By the time your transaction ships, the server sees a mergeable LWW outcome, not a conflict.
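The rebasing step can be sketched as follows. `PendingUpdate`, `applyRemote`, and `visibleValue` are hypothetical names, and a flat Map of confirmed values stands in for the local database; the only behavior taken from the description is that a remote delta rewrites the `original` of matching pending transactions.

```typescript
interface PendingUpdate {
  modelId: string;
  field: string;
  original: unknown; // value to fall back to if the server rejects
  next: unknown;     // value the user asked for
}

interface RemoteDelta {
  modelId: string;
  field: string;
  value: unknown;
}

// Apply a confirmed remote change and rebase any pending local edits onto it.
function applyRemote(confirmed: Map<string, unknown>, pending: PendingUpdate[], remote: RemoteDelta): void {
  confirmed.set(`${remote.modelId}.${remote.field}`, remote.value);
  for (const tx of pending) {
    if (tx.modelId === remote.modelId && tx.field === remote.field) {
      tx.original = remote.value; // the rollback target moves; tx.next does not
    }
  }
}

// What the UI shows: the newest pending edit wins over confirmed state.
function visibleValue(confirmed: Map<string, unknown>, pending: PendingUpdate[], modelId: string, field: string): unknown {
  const mine = [...pending].reverse().find((tx) => tx.modelId === modelId && tx.field === field);
  return mine ? mine.next : confirmed.get(`${modelId}.${field}`);
}
```

If the local transaction is later rejected, reverting to `original` lands on the other user's confirmed value instead of a stale one.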

What if the mutation gets rejected? The observable reverts, the local IndexedDB row was never written, and the user sees a brief flash followed by an error toast. Tuomas demoed exactly this in his React Helsinki 2020 talk, calling out the visible flicker as the cost. In production it almost never happens — most rejections are validation errors caught before the transaction is even created.

Permissions change mid-session? The server emits a G or S sync action. The client triggers a partial bootstrap for newly-visible sync groups, or deletes every locally-cached model tagged to a group it just left, before processing the next packet. The local IndexedDB never holds data the user isn't authorized to see.

Memory on a tab open for eight hours? It balloons, somewhat. A 50,000-issue workspace held in MobX is hundreds of megabytes; the bundle has a MemoryMonitor that surfaces usage. The local load strategy elides whole model classes from eager hydration, and lazy collections aren't rehydrated unless they're read. There's no LRU eviction in the object pool — Linear accepts the cost on large workspaces.

Why MobX, not Redux? Property-level reactivity. MobX's getter/setter model lets it know exactly which observers depend on which scalar. Redux's connect / useSelector subscribes a component to the entire store and re-runs the selector on every change — granularity comes from selector tuning by hand. Signals get closer but require explicit dependencies. MobX gets per-property subscriptions for free, which is the only way "50 deltas, 50 cell re-renders" holds without manual wiring at every component boundary.

Chapter 4: Rendering at 60fps

Only transform and opacity are fully composited — they animate on the GPU compositor without invoking layout or paint at all. (filter sometimes joins them, but only when the source element has already been promoted to its own layer; otherwise it triggers paint.) A second tier — color, background-color, border-color, fill — skips layout but still repaints, which is cheap on small targets and expensive on 5,000-row lists. Everything else (width, height, top, left, margin, padding) forces the layout engine to recompute the position of every subsequent element on the page.

Linear's bundle stays on the first two tiers — composited where possible, painted-but-not-laid-out where not — and never animates a layout-triggering property. Every transition, every hover state, every popover entrance stays off the layout engine.

/* What Linear does */

.row:hover {

background-color: var(--color-bg-hover);

transition: background-color 0.12s;

}

.icon-arrow {

transform: translateX(0);

transition: transform 0.15s;

}

.row:hover .icon-arrow {

transform: translateX(2px);

}

/* What you'd write if you didn't know better */

.row:hover {

margin-left: 2px; /* triggers layout for every row beneath this one */

background: lightgray; /* fine on its own */

transition: all 0.2s; /* and now you're animating margin */

}

The first version never touches layout. The arrow's transform runs on the GPU compositor, where the CPU sets up the animation and walks away, and the background-color change repaints only the hovered row. The second version forces the browser to recompute the position of every row beneath the hovered one, on every frame, for the duration of the transition. On a 5,000-issue list, that is the difference between buttery and unusable.

.button { transition: background-color 0.08s; } /* 80ms press feedback */

.row:hover { transition: background-color 0.12s; } /* 120ms hover */

.icon { transition: opacity 0.15s, transform 0.15s; }

.dropdown { transition: opacity 0.2s, transform 0.2s; } /* 200ms compound */

Most design systems default longer: Material's standard duration is 200ms, iOS's spring is closer to 350ms. Linear treats responsiveness as the dominant aesthetic and lets duration follow. Eighty milliseconds of press feedback registers as "responsive." Two hundred and fifty milliseconds, the short end of what most systems ship, registers as "laggy," because the brain has stopped associating the response with the click.

Why mounting 5,000 rows still works

The issue list does not window its rows, and that is deliberate. With per-property MobX observability, a mounted-but-offscreen row costs almost nothing on update: the observation graph is built once and stays. A windowing library would unmount and remount on every scroll, forcing MobX to rebuild the graph for each row each time. The bundle ships react-virtuoso and react-window for other surfaces (chat-like feeds, grids), but the issue list, the place where 50 cell re-renders need to be free, runs on Linear's own incremental renderer.

Reduced motion is a single toggle

@media (prefers-reduced-motion: reduce) {

*, *::before, *::after {

animation-duration: 0.01ms !important;

transition-duration: 0.01ms !important;

}

}

Two declarations collapse every animation and transition in the bundle to effectively zero. Performance and accessibility happen to want the same thing: less work for the browser, less motion for the user.

Why all is a code smell

transition: all 0.2s is the most innocent-looking footgun in CSS. It tells the browser to animate any property that changes. If a class change or media query later updates a layout property, the browser will animate it, and the animation will be a layout-triggering one. Linear's transitions name the exact properties they animate, every time. It looks more verbose. It removes an entire class of regression.
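A rule like this is simple enough to enforce mechanically. The check below is a sketch, not a tool Linear is known to use: `auditTransition` is a hypothetical helper, the layout-property list is just the one given earlier in this chapter, and the parser assumes each comma-separated entry starts with a property name and contains no timing function with commas in it.

```typescript
// Hypothetical lint-style check for a `transition` shorthand value.
// The list mirrors the layout-triggering properties named in this chapter;
// it is not exhaustive.
const LAYOUT_PROPERTIES = ["width", "height", "top", "left", "margin", "padding"];

function auditTransition(value: string): string[] {
  const warnings: string[] = [];
  for (const entry of value.split(",")) {
    // Assumption: the first token of each entry is the property name.
    const property = entry.trim().split(/\s+/)[0] ?? "";
    if (property === "all") {
      warnings.push("'all' animates any property that changes later");
    } else if (LAYOUT_PROPERTIES.some((p) => property === p || property.startsWith(p + "-"))) {
      warnings.push(`'${property}' triggers layout on every frame`);
    }
  }
  return warnings;
}
```

`auditTransition("background-color 0.12s")` returns an empty list, while `"all 0.2s"` and `"margin-left 0.2s"` each produce one warning.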

Chapter 5: The spinner is a choice

A spinner is not a fact about the world. It is a UI element someone added because the alternative (showing the user something useful) required more thought. Most of Linear's interactions contain no spinner anywhere. This is not because the underlying work is faster than in other apps. It is because Linear refused, repeatedly, at every interaction surface, to wait.

Cmd-K is a search over your local database

Open the command palette and start typing. Results appear before you finish the second letter. No debounce, no spinner, no "Searching..." state. The palette is not searching the server. It is running a fuzzy match over the MobX object pool that was already in memory before you opened it.

// approximate; based on bundle inspection

async function search(query: string): Promise<Result[]> {

// 5,000 fuzzy-ranked scores per keystroke, off the main thread.

const scored = await worker.scoreIssues(

query,

Array.from(issueStore.byId.values()),

);

return scored.slice(0, 50);

}

For a 5,000-issue workspace this is ~5,000 fuzzy-ranked scores per keystroke. The bulk runs in a Comlink-wrapped Web Worker so the main thread stays at 60fps while a background core ranks results. (A handful of small filters, like the member picker, score on the main thread, since the cost is trivial below a few hundred items.) Results come back over postMessage in a few milliseconds.

The scorer itself is small enough to read. SearchScoreHelper in the bundle is a ~6 KB Smith-Waterman-style dynamic-programming match with an explicit penalty table:

Exact match                → 1.0

Case-insensitive match     → 0.9999

Word-boundary hit          ×0.8 (chars: " ", "-", "_", ".", "/")

Whitespace/dash hit        ×0.17

Skip-character penalty     ×0.999^k (per intervening character)

Position-decay             ×0.999^position

Word-order bonus           ×1.12 (when query words appear in order)

Acronym fallback           ×0.1

"Meaningful match"         ×0.01 (no boundary, no substantial substring)

Input is normalized: "Café" matches "cafe" (NFD-decompose and strip combining marks); "C&D" matches "c and d". A 2,000-entry LRU caches tokenized inputs. There's a 30-second pooled-array recycler, withSharedArray, to avoid allocations on every keystroke. Every place in the UI that nudges ranking (recently viewed, current team, current cycle) does so via prioritize(score, weight), which computes score * 1.01^weight, and deprioritize(score, weight). These are multiplicative nudges, not re-scoring. This is what "build something simpler that we control" actually looks like at the code level: a hand-rolled, allocation-aware DP with a documented penalty table, sized to a budget of ~5,000 iterations per keystroke.

The architectural payoff: the entire app becomes a search box. Navigation is search. Issue creation is search. Status changes are search scoped to statuses. One UI primitive, used everywhere, running on data that is already local.
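Two of the scorer's pieces described above, input normalization and the multiplicative ranking nudge, fit in a dozen lines. This is a sketch from the description, not the bundle's code: `normalizeQuery` is a hypothetical name, the ampersand rule is a guess at how "C&D" becomes "c and d", and only the `prioritize` formula (score * 1.01^weight) comes from the text.

```typescript
// Hypothetical sketch of the normalization step.
function normalizeQuery(input: string): string {
  return input
    .normalize("NFD")                 // split "é" into "e" plus a combining accent
    .replace(/[\u0300-\u036f]/g, "")  // strip the combining marks
    .replace(/\s*&\s*/g, " and ")     // assumed rule: "&" reads as "and"
    .toLowerCase()
    .trim();
}

// Multiplicative nudge: each unit of weight scales the score by 1%.
function prioritize(score: number, weight: number): number {
  return score * Math.pow(1.01, weight);
}
```

Because the nudge is a multiplication, it can be applied after scoring without touching the match itself, which is why the callers never need to re-run the DP.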

Inline editing means there is no destination

Click a status pill. A popover appears anchored to it, with a fuzzy filter input. Pick a new status. The popover dismisses. The status updates in place. There was no page transition, no modal, no destination — the difference between "I changed the status" and "I went somewhere to change the status, then came back." The popover is the same component that powers Cmd-K, scoped to the field being edited:

// One primitive, parameterized by what it's editing

<FuzzyPicker

source={statusStore.all}

initial={issue.status}

onPick={(next) => {

issue.status = next; // MobX observable update, UI re-renders

issue.save(); // queued mutation, flushed in the background

}}

/>

The issue.save() is the same two-line pattern from Chapter 1. The user's mental model: "I clicked the pill, picked a status, done." The implementation matches.

Keyboard chords turn the app into a CLI

Single letters edit. Two-letter sequences navigate. Cmd modifiers act globally.

L              Add a label to the focused issue

A              Assign

S              Change status

P              Set priority

G then I       Go to inbox

G then M       Go to my issues

Cmd K          Open command palette

Cmd J          Open agent panel

Cmd Shift M    Toggle theme

There is no command you do frequently in Linear that requires a mouse. Keyboard input is the lowest-latency path the browser provides: no hover delay, no click target, no Fitts's-law penalty. A user who learns ten shortcuts has effectively turned Linear into a CLI on a local database, about as fast an interface to application data as exists today.

The runtime, in three lanes

Linear's runtime splits across three contexts:

Main thread                  React, MobX, the SyncWebSocket, IndexedDB writes, requestAnimationFrame batching.

Worker pool (N - 1 cores)    Search scoring, diff computation, AI inference, JSON normalization on the bootstrap stream.

Service worker               HTML shell, PWA manifest, three font files. Skips /graphql, skips /sync, skips everything dynamic.

Anything CPU-bound goes to the worker pool. Search scoring on a 5,000-issue workspace doesn't block typing; AI inference doesn't block scrolling; JSON normalization on the bootstrap stream doesn't block the first paint. Three contexts, three responsibilities, no cross-coordination machinery.

What they did not build

Grep the bundle for SharedWorker or the Background Sync API and you'll come up empty. (navigator.locks is used for IndexedDB write coordination and BroadcastChannel for cross-tab settings sync — but neither carries the sync engine's data path; tabs still converge through the server.)

The simplest cross-tab sync model is "every tab opens its own WebSocket, every tab maintains its own MobX graph, the server is the rendezvous point." Tab A mutates an issue. The server fans the resulting SyncAction to every connected client, including Tab B. Tabs converge through the server, not through cross-tab IPC. The cost is bandwidth — N tabs, N WebSocket connections. The benefit is simplicity: no leader election, no SharedWorker quirks, no Safari edge cases, no Electron-versus-browser divergence. Linear chose to pay bandwidth to avoid coordination complexity. The Background Sync API gets the same treatment — Linear's transaction queue already handles offline replay, so it would add a dependency on browser cooperation Linear doesn't need.
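The rendezvous model is small enough to simulate in memory. The sketch below uses no WebSockets and is not Linear's code: `TabClient`, `RendezvousServer`, and the `SyncAction` fields are hypothetical, and it omits the optimistic local write that the originating tab would make before the server echoes the action back.

```typescript
// Hypothetical in-memory simulation: tabs converge through the server only.
type SyncAction = { id: number; modelId: string; field: string; value: string };

class TabClient {
  readonly fields = new Map<string, string>(); // stands in for the tab's own MobX graph
  readonly applied: SyncAction[] = [];

  receive(action: SyncAction): void {
    this.fields.set(`${action.modelId}.${action.field}`, action.value);
    this.applied.push(action);
  }
}

class RendezvousServer {
  private nextId = 1;
  private readonly clients: TabClient[] = [];

  connect(client: TabClient): void {
    this.clients.push(client); // one connection per tab, no leader election
  }

  // Accept a mutation and fan the resulting action out to every connected tab.
  mutate(modelId: string, field: string, value: string): SyncAction {
    const action: SyncAction = { id: this.nextId++, modelId, field, value };
    for (const client of this.clients) client.receive(action);
    return action;
  }
}
```

Nothing in `TabClient` knows other tabs exist. That is the simplicity being bought, and the N entries in `clients` are the bandwidth being paid.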

The spinner is a choice. So is everything that prevents one.

Chapter 6: The debt the team chose to keep paying

If you read the first five chapters looking for the one Linear technique to copy, you are going to come away frustrated. There isn't one. There are about thirty.

A local-first sync engine without granular MobX rendering still hitches on big lists. Granular rendering without a local database still waits on every network round-trip. Optimistic UI without a durable transaction queue loses writes when the user closes the tab. A transaction queue without rAF batching hitches the main thread on flush. Variable fonts without modulepreload still serialize on the network waterfall. Modulepreload without intent-based prefetch still pays a chunk-fetch cost on first navigation. A cache-first service worker without MobX granularity still re-renders the world on every state change.

Each technique alone would be fine. Pasted on top of an otherwise traditional app, it would buy a few percent. None of them, in isolation, would let you ship Linear.

The hundred-millisecond threshold

Stack them and something different happens. Below roughly 100 milliseconds, an interaction stops feeling like a consequence of the click and starts feeling like the click itself. The user's intention and the screen's response collapse into a single perceived event. Above that threshold, they're separate: I clicked, and then it happened. Below, they fuse: I clicked it.

Most of Linear's interactions land below 100 milliseconds end to end. Not because any single piece is impossibly fast, but because the budget has nothing eating into it.

Traditional CRUD app, "change issue status" interaction:

Click handler ~5ms

Show spinner ~16ms (one frame)

POST /api/issues/:id ~120ms (network)

Server validation + DB write ~40ms

Response back to client ~80ms (network)

Reconcile state, re-render list ~30ms

Hide spinner ~16ms

-----------------------------------------------

Perceived latency ~307ms

Linear, same interaction:

Click handler ~2ms

Mutate MobX observable ~0.5ms

Re-render the one cell that depends on it ~3ms

Queue transaction (fire-and-forget) ~0.2ms

-----------------------------------------------

Perceived latency ~6ms

The latency floor is not pushed down by one big optimization. It is the absence of a hundred small costs.
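The two budgets above are just sums, and the arithmetic is worth checking. The figures are the article's own estimates; the variable names are illustrative.

```typescript
// Per-step estimates from the two breakdowns above, in milliseconds.
const crudStepsMs = [5, 16, 120, 40, 80, 30, 16];
const linearStepsMs = [2, 0.5, 3, 0.2];

const sumMs = (steps: number[]): number => steps.reduce((total, ms) => total + ms, 0);

const crudTotalMs = sumMs(crudStepsMs);     // 307
const linearTotalMs = sumMs(linearStepsMs); // about 5.7, rounded to ~6 in the text
const speedup = crudTotalMs / linearTotalMs; // roughly 54x, the "fifty-fold gap"
```

Of the 307 ms, 200 ms is the two network legs alone, which is why no amount of server tuning closes the gap.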

The server is wherever you are

The last decision in the stack is geographic. Linear's multi-region architecture replicates the entire stack per region rather than sharding the database. A user in Frankfurt hits a Frankfurt deployment; a Cloudflare Worker at the edge caches auth signatures so even token validation stays in-region. For an app that mostly resolves from local state the network is rarely consulted, and when it is, the server is close. A user in Tokyo and a user in Berlin both feel like the app is running on their machine, because effectively it is.

What this architecture costs

Nothing about this is free. The first cold load on a 50,000-issue workspace is genuinely slow — it's why partial bootstrap modes exist. Holding a workspace in MobX means each tab eats hundreds of megabytes of RAM. N open tabs means N WebSocket connections rather than a single SharedWorker-coordinated one — Linear deliberately pays the bandwidth to skip the coordination complexity. And the two native mobile codebases (Swift + Kotlin) cost real engineering headcount and slower feature parity than a webview wrapper would.

And the architecture has been stress-tested. On January 24, 2024, an internal database migration ran a TRUNCATE TABLE … CASCADE against a shared table; one hour of platform downtime followed, twelve percent of workspaces had data temporarily unavailable, and 4,136 sync packets were eventually unrecoverable (an average of 0.44 per affected workspace). The detection lag was 30 minutes, longer than it would have been in a server-rendered app, because clients were still happily reading from their local caches. The deletion at the database layer didn't generate sync packets, so clients didn't know anything had changed. Recovery was easier than it would have been in a stateless app: 99% of the lost data was restored within 36 hours by replaying sync actions on top of the 04:47 UTC backup.

Then in March 2026, a different failure mode: a variable-shadowing bug in the access-control layer bypassed the sync-group permission filter for ~63 minutes, briefly leaking cross-team data. Recovery used a different primitive. Instead of replaying packets, Linear bumped backendDatabaseVersion, which is the same kill-switch IndexedDB uses for schema migrations. Every client wiped its workspace database and re-bootstrapped from scratch.

The architecture is not invincible. It is, however, recoverable, by two different mechanisms against two different failure modes, in a way most architectures aren't.
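The version kill-switch reduces to one comparison on the client. A sketch under assumptions: only the `backendDatabaseVersion` name comes from the text; `LocalStore` and `reconcileVersion` are hypothetical, and an in-memory Map stands in for the IndexedDB workspace database.

```typescript
// Hypothetical sketch of a version-bump wipe.
type LocalStore = {
  backendDatabaseVersion: number;
  rows: Map<string, unknown>; // stands in for the local workspace database
};

// Compare the locally stored version with the one the server announces.
// On a mismatch, drop everything; the caller then re-bootstraps from scratch.
function reconcileVersion(store: LocalStore, serverVersion: number): "kept" | "wiped" {
  if (store.backendDatabaseVersion === serverVersion) {
    return "kept";
  }
  store.rows.clear();
  store.backendDatabaseVersion = serverVersion;
  return "wiped";
}
```

The appeal of the primitive is that the server never has to know which rows a client should not have. It only has to change one number.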

There is one place the architecture stops trying. AI inference can't be made to feel instant: the round-trip to a frontier model is always longer than the perception threshold. Linear is honest about this. The Triage Intelligence post describes the agentic reasoning path as "fast compared to a human, but slower than Linear's usual deterministic systems." Cmd-J streams. The agent panel shows progress. The 100ms rule still holds for everything sync-engine-shaped, and AI is allowed to be the exception. The architectural claim is sharper for not pretending otherwise.

The optimistic part of the story

None of this is unavailable to you. The platform has the parts: IndexedDB, MobX (or Zustand, or signals), variable fonts, modulepreload, service workers, web workers, Comlink, Cloudflare Workers.

The question is not whether you can build something this fast. The question is whether you will spend a year doing it.

The catch isn't any single decision. It's that you have to make all of them, in roughly the right order, and keep making them as the codebase grows. There is no version of this work where Linear shipped fast, then stopped. Every quarter someone on that team rewrites a bundler, audits a transition duration, redesigns a popover so it doesn't trigger layout, refactors a model so its observable graph is one observer narrower. The application's speed is not a foundation laid in 2019. It is a debt the team chose to keep paying. The rest of us pay a different debt instead.

Sources

Linear has published more about its own engineering than most companies its size. The most useful primary sources, in rough order of depth:

Tuomas Artman — Local-First Conf 2024. The most detailed public account of the sync engine, with the "two CPU cores in Europe for 80 bucks a month" line and the local-strategy explanation.

wzhudev — reverse-linear-sync-engine. CTO-endorsed ("probably the best documentation that exists — internally or externally"). The chapter on transactions is the basis for most of Chapter 3 of this article.

Linear — Scaling the Linear Sync Engine and the multi-region post. The two engineering posts that go furthest into the actual architecture.

Karri Saarinen — Why is quality so rare? and Tuomas Artman — Quality Wednesdays. The non-technical companion: how the team's process produces the architecture.

Kenneth Skovhus — ViteConf 2025 and the Vite 8 release notes. The bundler arc, with the 46s → 6s number.

Linear — Jan 24 2024 incident post-mortem and the March 24 2026 post-mortem. The architecture's two highest-profile failure modes, both well-handled.

marknotfound and fujimon. Two more independent reverse-engineering writeups — useful when wzhudev's gets ahead of itself.